Hi! Welcome to my thinking corner of the Internet, where I try to organize thoughts, projects, and the occasional bit of music.


- Incorrect DNS redirect configuration: my domain's IPv6 (AAAA) record was not pointing to the correct IP from DO, which meant the Certbot challenges were failing.

- Utilizing the Certbot webroot plugin: overcoming the Let's Encrypt challenges involved using the webroot plugin, with the challenge location configured to point to the directory created by Certbot.

- Nginx reverse proxy config: my Nginx is another container in the same system, so I had to create a volume to expose the certificates created by Certbot to it. The folder where Certbot stores them may vary. I exposed the whole thing to be able to navigate through everything. My new volume looked like this:

- Have the CH340 driver properly installed so that the ESP32's USB is recognized as just another serial port.

- Use Chrome

- Follow the steps at https://install.wled.me/ (select the ESP's COM port and, depending on the ESP board, hold down the BOOT button while clicking install)

- Basics on the high level networking implementation in Godot: Remote Procedure Calls

- Keeping clients and server synchronized, signaling back and forth

- Have a lobby stage where the game starts and can be synchronized

- Have the server deal with collisions as a source of truth and inform clients

- Tilemaps for creating 2D levels

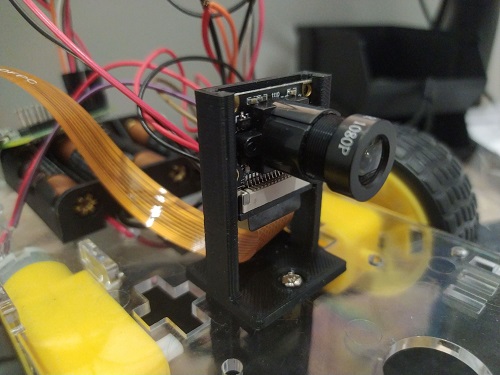

- 2WD Robot frame

- L9110 Dual-Channel H-Bridge

- Raspberry Pi Zero (Raspbian Lite in an SD card)

- TP4056 boost circuit for USB charging: model 03962A

- LiPo battery 1200mAh

- Raspberry Pi camera module: any will do as long as it is compatible

- FFC camera cable for Pi Zero: the Pi Zero has a slightly smaller camera port, so I had to buy this

- 4 AA batteries. The motors need more power than the Pi, so a battery pack will be needed

- Instead of having two ports and channels, put data and image in the same WebRTC channel

- A 3D-printed case to hide everything and make it look nicer.

- Add a couple of servos to pan and tilt the camera.

- Plug in a mic and a speaker to have two-way audio comms!

-

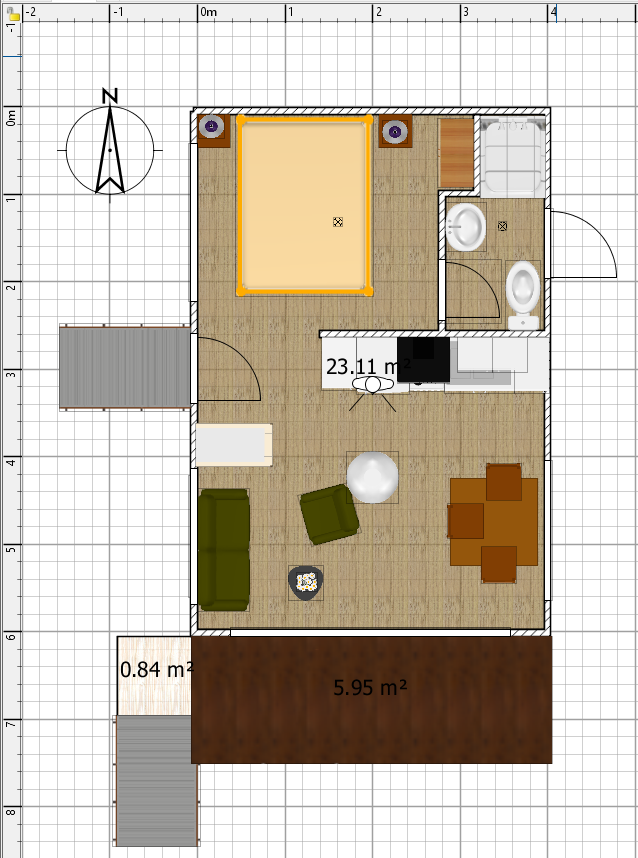

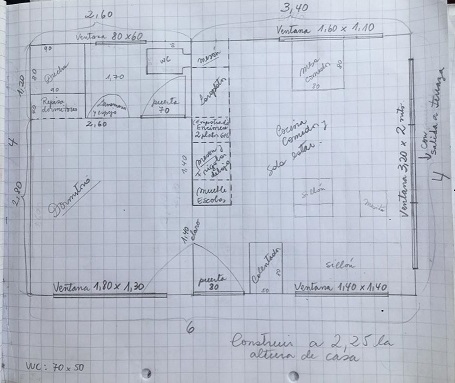

The cabin will be placed in a slope, there’s no terrain options so the design cannot be placed in a slope. I saw workarounds for this in youtube that did not quite convince me as solutions. I decided to just elevate the whole thing and use some high foundings to represent this fact.

-

The original design included some windows types that the built-in models did not include, some can be found in the models section of the same Sweet Home 3D website but not in my case.

-

As a consequence of the previous point, the truth is the amount of models can seem somewhat reduced.

-

For the roof I did import a model from the official website.

-

Some default cloud background can be added but not pictures.

- Set up a Slash command to send a call with the desired text as payload

- Lambda function to deal with the request

- Amazon SNS messaging system to publish the requests

- A Lambda function that processes the image and sends the response back to Slack

- API Gateway receives the request from Slack and proxies it to proxyLambda

- proxyLambda publishes a message to SNS with the request data and answers Slack ASAP

- subscriberLambda gets triggered by the new message and processes it, generating the image

- Call out web service.

- Call response to Slack.

- Set a trigger for once-a-week execution

-

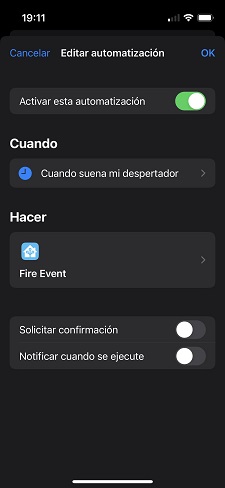

This one will help us set up an event.

-

The next one will show us how to make the event a CRON expressions.

- Go to Source Tree.

- Check the files that changed between current and the last TAG.

- Copy these files into AWS S3

- Invalidate the CloudFront cache (Amazon's CDN… sort of)

- Use git diff to get the changed files (the behaviour changes depending on whether we are on a tag or not)

- AWS CLI to send the specific files

- AWS CLI to invalidate the cache programmatically
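The scripted steps in the list above could be assembled roughly as follows. This is only a sketch under assumptions: the bucket name, distribution ID, tag and file names are hypothetical placeholders, and the functions only build the `git` and `aws` command lines instead of running them, so the flow can be inspected before touching a real bucket.

```python
import shlex


def diff_command(last_tag):
    """Git command that lists the files changed between a tag and HEAD."""
    return ["git", "diff", "--name-only", last_tag, "HEAD"]


def build_deploy_commands(changed_files, bucket, distribution_id):
    """Return the AWS CLI commands (as argument lists) for one deploy."""
    commands = []
    for path in changed_files:
        # Upload each changed file to the same key inside the bucket.
        commands.append(["aws", "s3", "cp", path, f"s3://{bucket}/{path}"])
    if changed_files:
        # Invalidate only the paths that were just uploaded.
        paths = ["/" + path for path in changed_files]
        commands.append(
            ["aws", "cloudfront", "create-invalidation",
             "--distribution-id", distribution_id, "--paths", *paths]
        )
    return commands


if __name__ == "__main__":
    # Hypothetical values, for illustration only.
    print(shlex.join(diff_command("v1.0")))
    for cmd in build_deploy_commands(["index.html", "css/site.css"],
                                     "example-bucket", "EXAMPLEDISTID"):
        print(shlex.join(cmd))
```

Each returned list can be handed to `subprocess.run(cmd, check=True)` once the printed commands look right.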

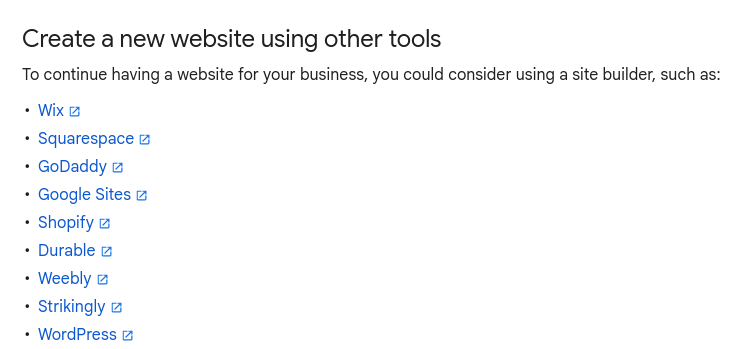

Migrating before Google Business websites shutdown

So, once again, Google is saying goodbye to another service: the websites attached to the business profiles you can create from Google Maps map points.

My parents have a rental cabin by the lake, and my mom took advantage of this easy Google service, where you can quickly create a website that gets linked in Google Maps and that, for all intents and purposes, also works as a standalone website.

These past few weeks I've been reading a lot about the small/indie web and how companies take most of our data with them when they shut down. A particularly sad website I came across is the Google Graveyard, which lists all the services Google has killed.

They received a notice from Google that these pages would be shut down soon, with no option offered other than creating a new one on services like Wix, which is neither a good nor an easy solution. https://support.google.com/business/answer/14368911?hl=en

Since my mom put effort into creating this website, I wanted to save it somehow. Since it's basically a static website with some dynamic comments, I decided to create a local copy with wget and host it on my VPS under a new domain name I bought for the occasion: https://lagoyluz.com

wget --adjust-extension -H -k -K -p https://lagoyluz.negocio.site

I found these parameters worked best for also downloading the resources the page needs, such as CSS, fonts, and some images. You can check the wget docs for the details on each parameter.
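For quick reference, here is a small sketch that annotates each flag of the command above and rebuilds it. The descriptions paraphrase the wget manual; the helper name `build_wget_command` is just an illustrative choice.

```python
import shlex

# Each flag from the command above, with a short paraphrase of the wget manual.
WGET_FLAGS = {
    "--adjust-extension": "save HTML/CSS files with a matching .html/.css suffix",
    "-H": "span hosts: also fetch resources served from other domains",
    "-k": "convert links so the local copy points at local files",
    "-K": "keep a .orig backup of each file before its links are converted",
    "-p": "download page requisites (images, stylesheets and similar)",
}


def build_wget_command(url):
    """Return the wget invocation as an argument list."""
    return ["wget", *WGET_FLAGS, url]


if __name__ == "__main__":
    for flag, meaning in WGET_FLAGS.items():
        print(f"{flag:20} {meaning}")
    print(shlex.join(build_wget_command("https://lagoyluz.negocio.site")))
```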

The cloned index.html itself was absolutely bloated with Google's JavaScript tracking code and was completely unfriendly. I made a first pass removing some scripting I thought unnecessary for my clone and managed to cut it down to a still not very nice 3500 lines of mostly garbage. Anyway, everything displayed and worked except the navigation menu and its links. I did not investigate why they failed in the cloned version; I just added my own JavaScript to cover the link and anchor functionality.
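A cleanup pass like this can be partly automated. The sketch below is not what I actually ran (my pass was manual and kept some scripts); it is a blunter tool, assuming you are happy to drop every `<script>` element from the clone. A regular expression is enough for a one-off like this, but it would trip on inline scripts that contain the text `</script>` inside a string.

```python
import re

# Matches a whole <script ...>...</script> element, across lines, any case.
SCRIPT_BLOCK = re.compile(r"<script\b[^>]*>.*?</script\s*>",
                          re.IGNORECASE | re.DOTALL)


def strip_scripts(html):
    """Remove every <script> element from an HTML string."""
    return SCRIPT_BLOCK.sub("", html)


def count_lines(html):
    """Number of lines, handy for a before/after comparison."""
    return len(html.splitlines())


if __name__ == "__main__":
    sample = '<p>a</p>\n<script src="t.js"></script>\n<script>\nvar x = 1;\n</script>\n<p>b</p>'
    cleaned = strip_scripts(sample)
    print(count_lines(sample), "->", count_lines(cleaned))
    print(cleaned)
```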

I copied it to a static folder behind my nginx reverse proxy and created a certificate for it with Let's Encrypt (which gave me some trouble because I had again forgotten how the ACME challenges work). After a few hours I had my own version of the website working under a new domain with SSL.

Regarding the dynamic content, I will have to think of some backend or static build so my parents can push new reviews they want to share and change the photographs.

notes

Now more than ever, I feel strongly about walled gardens and the impact of trusting them with your information, work and time, only to be let down once they decide to shut down x or y service for no reason other than not making billions a year. It is true, though, that effort is required to make self-hosting or domain-name-driven identity more technically accessible. We need more services like the ones being developed by the Small Tech Foundation https://small-tech.org/, and to go even further with high-level services where spawning your digital identity with your own domain at home is a matter of a few clicks, just as easy as getting a new phone number.

related links

https://boffosocko.com/2017/07/28/an-introduction-to-the-indieweb/

https://blog.morettigiuseppe.com/articles.html From when I helped to render the cabin’s blueprints

TV backlight with LED strip and ESPHome

This project essentially follows the same approach as my previous Bed Lights project, utilizing the ESP32 and ESPHome, along with the same LED strip.

However, in this iteration, I introduced some automations. Apart from controlling the light in Home Assistant, I also integrated Chromecast with the TV. This integration allows me to determine whether the TV is on and which application is currently in use.

With this information, I implemented a couple of automations to adjust the light when the TV is in playback mode. Specifically, I dim the light when the TV is in playback, except for Spotify where the light effects differ.

Here are the details of the automations in YAML format:

```yaml
alias: TV Light reacts to playback in TV
description: ""
trigger:
  - platform: device
    device_id: 43254314315431
    domain: media_player
    entity_id: 543265435432
    type: playing
    enabled: false
  - platform: state
    entity_id:
      - media_player.livingroom_tv
    attribute: app_name
    from: com.google.android.apps.tv.launcherx
condition: []
action:
  - if:
      - condition: state
        entity_id: media_player.livingroom_tv
        attribute: app_name
        state: com.spotify.tv.android
    then:
      - service: scene.turn_on
        target:
          entity_id: scene.chill
        metadata: {}
    else:
      - service: scene.turn_on
        target:
          entity_id: scene.watching_tv
        metadata: {}
mode: single
```

This YAML configuration was generated using the GUI to set up the automation. Two scenes with predefined light configurations were created, specifying color and brightness options. This allows for easy modification of the scenes without affecting the automation.
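To make the decision logic easier to follow, here is the same rule as a small Python sketch. It is only an illustration of what the enabled trigger and the if/else do; the function name is made up, and Home Assistant itself runs the YAML, not this code.

```python
LAUNCHER_APP = "com.google.android.apps.tv.launcherx"
SPOTIFY_APP = "com.spotify.tv.android"


def scene_for_app_change(old_app, new_app):
    """Return the scene to activate, or None when the trigger would not fire."""
    # The state trigger only fires when app_name changes away from the launcher.
    if old_app != LAUNCHER_APP or new_app == old_app:
        return None
    # Spotify gets its own scene; every other app dims for watching.
    if new_app == SPOTIFY_APP:
        return "scene.chill"
    return "scene.watching_tv"


if __name__ == "__main__":
    print(scene_for_app_change(LAUNCHER_APP, SPOTIFY_APP))
    print(scene_for_app_change(LAUNCHER_APP, "com.example.video"))  # hypothetical app id
```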

That’s all for today!

Trying out VFX with Blender

I wanted to try adding 3d stuff to my drone footage in Lago Ranco, Chile. So it was a great opportunity to learn some basics on the VFX tools in Blender. I followed two tutorials for this.

Here is the result. There are several takeaways. First, I need to learn proper lighting and how to match the actual shadows in the footage.

I couldn't get a good material for the stone. I would like it to look similar to the mountain, as if it had been extracted from it.

But it's a start.

I'm taking care of my plants years old

This Monstera plant was a friend's gift, and it has been living on the floor for a long time. Let's overengineer a fix for this that takes at least 10 times longer than buying a stool in the shop next door.

It uses a 3D-printed platform piece and three wooden sticks, 2cm in diameter and 40cm long. I glued it all together because it was faster.

The STL file is here, or on the Thingiverse page.

Media Server with Jellyfin with remote power up

If you’re like me, with a desktop PC that isn’t always online but houses your media server powered by Jellyfin, you’ve probably faced the challenge of waking it up quickly when needed. Jellyfin is an open-source media server that allows you to manage and stream your media collection effortlessly.

I initially experimented with the traditional magic packet approach, only to find that my motherboard didn’t fully support it, and reliability was a bit of an issue.

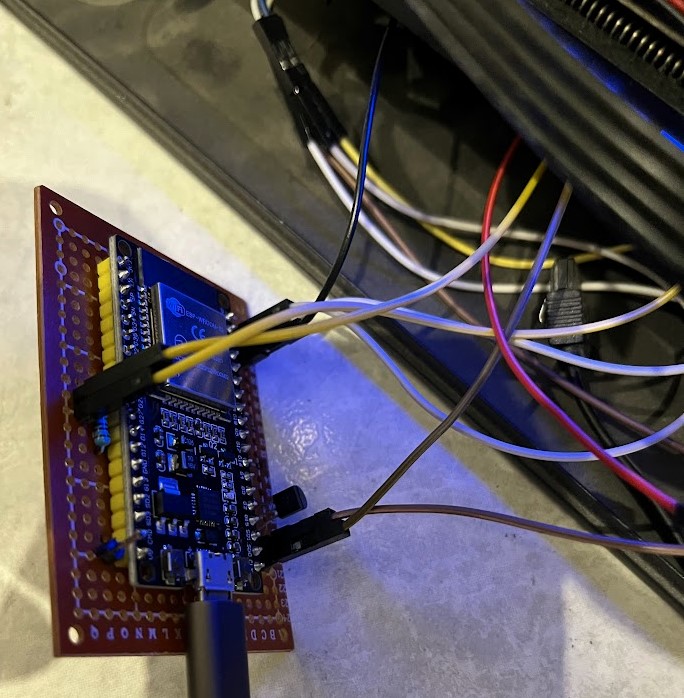

Enter the ESP32, a versatile microcontroller I've used in other projects, and ESPHome. The ESP32 is a powerful and flexible microcontroller widely used in the maker community. ESPHome is a framework for creating custom firmware for ESP8266/ESP32 boards. After stumbling upon this project from Erriez, I discovered a solution where an ESP32 could intercept the connections for the power and reset buttons of the desktop PC.

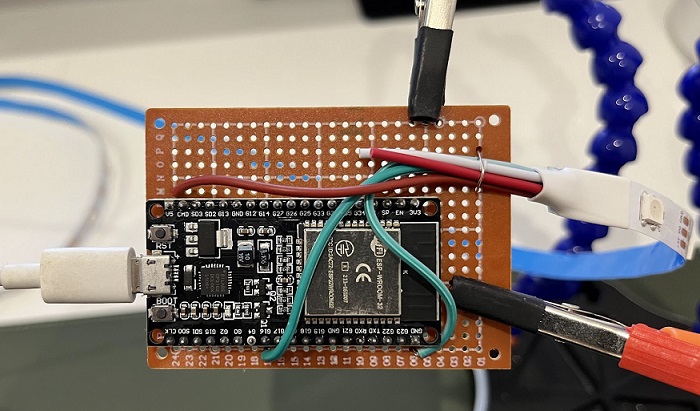

My initial prototype, though in a rather “temporary fashion,” successfully allowed me to power up the PC. You can find the details on how to build the circuit in the GitHub project page.

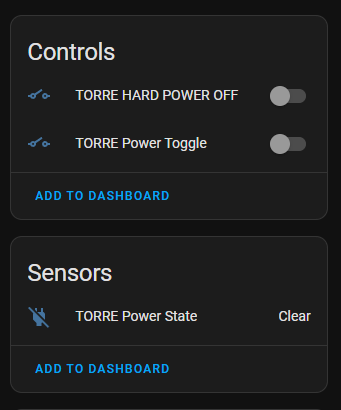

This is how the device turns out in HA.

However, reading the reset pin to determine the computer’s status proved to be a bit trickier than expected. Undeterred, I sought an alternative solution and found a Home Assistant companion app for Windows. This app, coupled with Mosquitto MQTT, allowed me to create a sensor in Home Assistant that could accurately report whether the computer was sleeping, off, or available. This way, I could make informed decisions about when to press the power button remotely before diving into a Jellyfin binge.

As I explored further, I discovered additional possibilities for improvement. Leveraging the Google TV integration, which provides a sensor indicating the currently opened application, I could refine my setup. By identifying when Jellyfin was in use, I could automatically check whether the computer needed powering up, avoiding the need to wake it up myself.
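The rule behind that automation is small enough to sketch in Python. This is an illustration only: the state names follow the ones mentioned above (sleeping, off, available), while the app name string and the function name are assumptions, since the real values come from the Home Assistant sensors.

```python
def should_press_power(pc_state, current_app, media_app="Jellyfin"):
    """Decide whether the ESP32 should 'press' the PC power button.

    pc_state: state reported by the companion app sensor.
    current_app: application reported by the Google TV integration.
    media_app: assumed app name that indicates the media server is needed.
    """
    if current_app != media_app:
        # Nothing on the TV needs the media server right now.
        return False
    # Only press the button when the PC is not already available.
    return pc_state in ("off", "sleeping")


if __name__ == "__main__":
    print(should_press_power("off", "Jellyfin"))
    print(should_press_power("available", "Jellyfin"))
```

Checking the PC state first matters because the power button is a toggle: pressing it while the PC is already on could send it to sleep instead.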

If you’re facing a similar challenge or love tinkering with home automation, give this approach a try.

ESP32 ftw

chao!

WordPress, Nginx Reverse Proxy and Certbot

*this is what AI thinks of my problem


Welcome, everyone! In this post, I'll walk you through setting up a WordPress installation in Docker, behind an NGINX reverse proxy (to route different domains to different services), with Certbot to secure the site with SSL. Throughout this journey, I encountered and somewhat successfully addressed various challenges.

Introduction

To provide a bit of context, I manage a Virtual Private Server (VPS) on Digital Ocean. On this VPS, I have Docker installed, and I utilize an NGINX reverse proxy to serve not only this Wordpress installation but also my personal website and other services I need. My personal domain is pointing to this VPS, creating a cohesive and centralized environment for all my online endeavors.

Configuring Docker and WordPress

I kicked off the process by installing WordPress through Docker. I have all my docker configs organized in folders depending on the service. My docker compose file for all Wordpress needs looks like this:

```yaml
version: '3.4'
services:
  database:
    image: mysql:5.7
    command:
      - "--character-set-server=utf8"
      - "--collation-server=utf8_unicode_ci"
    ports:
      - "3306:3306" # (*)
    restart: on-failure:5
    environment:
      MYSQL_USER: wordpress
      MYSQL_DATABASE: wordpress
      MYSQL_PASSWORD: wordpress
      MYSQL_ROOT_PASSWORD: wordpress
  wordpress:
    depends_on:
      - database
    image: wordpress:latest
    ports:
      - "8008:80" # (*)
    restart: on-failure:5
    volumes:
      - ./public:/var/www/html
    environment:
      WORDPRESS_DB_HOST: database:3306
      WORDPRESS_DB_PASSWORD: wordpress
      WORDPRESS_DB_USER: wordpress
      WORDPRESS_DB_NAME: wordpress
  phpmyadmin:
    depends_on:
      - database
    image: phpmyadmin/phpmyadmin
    ports:
      - 8084:80 # (*)
    restart: on-failure:5
    environment:
      PMA_HOST: database
  wordmove:
    tty: true
    depends_on:
      - wordpress
    image: mfuezesi/wordmove
    restart: on-failure:5
    container_name: luablu_wordmove # (+)
    volumes:
      - ./config:/home
      - ./public:/var/www/html
      - ~/.ssh:/root/.ssh # (!)
networks:
  default:
    external:
      name: nginx-reverse_default
```

The most important part of this config is defining the network and making all the defined services belong to it.

Configuring NGINX

I had to route the new domain to WordPress, and at first I wasn't able to find the host and port that served the WordPress installation. I ended up with this config.

```nginx
server {
    server_name domain.com;
    listen 80 ;

    # Do not HTTPS redirect Let'sEncrypt ACME challenge
    location /.well-known/acme-challenge/ {
        root /home/static-websites/letsencrypt;
        auth_basic off;
        allow all;
        try_files $uri =404;
        break;
    }

    location / {
        return 301 https://$server_name$request_uri;
    }
}

server {
    server_name domain.com;
    listen 443 ssl http2 ;

    ssl_session_timeout 5m;
    ssl_session_cache shared:SSL:50m;
    ssl_session_tickets off;

    ssl_certificate /etc/nginx/letsencrypt/live/domain.com/fullchain.pem;
    ssl_certificate_key /etc/nginx/letsencrypt/live/domain.com/privkey.pem;

    add_header Strict-Transport-Security "max-age=31536000" always;

    location / {
        proxy_pass http://wordpress:80/;
        proxy_set_header Host $host;
        proxy_set_header X-Real-IP $remote_addr;
        proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;
        proxy_set_header X-Forwarded-Proto $scheme;
    }
}
```

The ACME challenge location will be relevant for the Certbot config later. The first block redirects to HTTPS. For now, the important part is the proxy_pass, which points to the container name and the port the container listens on (80, not the 8008 published on the host). I actually didn't know you could use a container's name to point to it. None of the IPs or other host names I knew worked for me.

With these adjustments, I achieved accessibility to the WordPress site from any external location.

SSL Certificate Configuration with Certbot

Moving on, I installed Certbot [from the official docs](https://certbot.eff.org/instructions?ws=other&os=ubuntufocal) on my Digital Ocean VPS to obtain an SSL certificate. This step presented 2 main hurdles:

Successfully navigating these challenges, I generated the SSL certificates necessary to enhance the security of the site.

- /etc/letsencrypt/:/etc/nginx/letsencrypt

Automating the Renewal Process

Recognizing that security extends beyond the initial certificate installation, I created a script that automates the renewal process. This script accesses the container and triggers a reload to apply the new certificates. This automation is seamlessly integrated into the Certbot renewal process, executed as a convenient hook.

To streamline the entire process, I added the certbot renewal command with the hook to a cronjob on my Digital Ocean VPS. This ensures that the certificates are renewed at regular intervals, maintaining the site’s security and constant accessibility.

Since Nginx only loads the certificates once at startup, it needs to be reloaded after the cert is regenerated. I did this by attaching a post-renewal script to Certbot with the following content.

```bash
#!/bin/bash
# No -it here: a hook run from cron has no TTY, and "docker exec -t" fails without one.
docker exec nginx-proxy service nginx reload
```

nginx-proxy is the name of the container that runs my nginx reverse proxy, and the rest is the command to execute inside it. To include the trigger, we run Certbot like this:

certbot renew --deploy-hook /root/scripts/letsencrypt/post-deploy-certbot-renewal.sh

Make sure you add the deploy hook for the auto-renewal. You can do so by placing it in the deploy hook folder inside /etc/letsencrypt (renewal-hooks/deploy) or by defining it when you create the cert. When renewing, Certbot will remember it.
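One way to confirm that the cron renewal keeps working is to check how many days the served certificate has left. The sketch below is an optional extra, not part of my setup: it assumes you feed it the `notAfter=...` line printed by `openssl x509 -enddate -noout -in fullchain.pem`, and the 20-day warning threshold is an arbitrary choice.

```python
import ssl
import time


def parse_enddate(line):
    """Extract the date from an openssl 'notAfter=...' line."""
    return line.strip().split("=", 1)[1]


def days_until_expiry(not_after, now_epoch):
    """Days between now_epoch and a certificate date such as
    'Jan  5 09:34:43 2018 GMT'."""
    expires = ssl.cert_time_to_seconds(not_after)
    return (expires - now_epoch) / 86400


def needs_attention(not_after, now_epoch, warn_days=20):
    """True when the certificate is closer to expiry than warn_days."""
    return days_until_expiry(not_after, now_epoch) < warn_days


if __name__ == "__main__":
    # Hypothetical openssl output, for illustration only.
    line = "notAfter=Jan  5 09:34:43 2018 GMT"
    print(round(days_until_expiry(parse_enddate(line), time.time()), 1))
```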

That's all!

ESP32 camera module in Home Assistant

We are going to integrate an ESP32 module with a camera, motion sensor and display into my Home Assistant installation. The key to this project was ESPHome, a platform that simplifies integrating ESP32 devices into Home Assistant.

The module, which I had stored away for a while, is the TTGO: LILYGO® TTGO T-Camera ESP32 WROVER camera with 0.96" OLED. Importantly, my board is version 1.6.2. That will be relevant when identifying the pins of the different peripherals.

Problems Encountered and Solutions

1. Problem with Flashing and Windows

At first, I ran into a problem when trying to upload the program to the ESP32. For some reason, uploading from macOS was impossible, perhaps because of the USB/serial drivers. The solution was to switch to Windows, from which I could upload the binaries from ESPHome.

2. Changes in the Camera Pins

I had difficulties with the camera pins, since they differed slightly from the standard ones. Fortunately, I found useful examples that helped me solve this. In the links section, under “Working Configuration for TTGO ESP32 Camera Board”, one of the comments proposes a pin configuration that works for the 1.6.2 board.

ESPHome Configuration

I did the whole configuration through the ESPHome tools. ESPHome is an open-source platform designed to simplify integrating ESP8266- and ESP32-based devices with the Home Assistant ecosystem. It allows IoT devices to be configured and programmed easily using a simple YAML format, which makes it easy to create custom firmware for a variety of sensors, actuators and other electronic components.

I started from the configuration in the resource links, and in the end merged ESPHome's initial configuration with the one from the reference posts. I also added the configuration needed to send messages to the display through an MQTT queue. The latter is not very useful, but it was my first time interacting with MQTT.

```yaml
esphome:
  name: camera-1

esp32:
  board: esp32dev
  framework:
    type: arduino

# Example configuration entry
mqtt:
  broker: homeassistant
  username: mosquitto
  password: 432fr354g543r

# Enable logging
logger:

# Enable Home Assistant API
api:
  encryption:
    key: "fdsfdsfds+cKCuURB6GbFf4="

ota:
  password: "fdsfdsfdsfds"

wifi:
  ssid: !secret wifi_ssid
  password: !secret wifi_password

  # Enable fallback hotspot (captive portal) in case wifi connection fails
  ap:
    ssid: "Camera-1 Fallback Hotspot"
    password: "23243242d"

captive_portal:

# ttgo_camearv16 configuration
esp32_camera:
  external_clock:
    pin: GPIO4
    frequency: 20MHz
  i2c_pins:
    sda: GPIO18
    scl: GPIO23
  data_pins: [GPIO34, GPIO13, GPIO14, GPIO35, GPIO39, GPIO38, GPIO37, GPIO36]
  vsync_pin: GPIO5
  href_pin: GPIO27
  pixel_clock_pin: GPIO25
  resolution: 640x480
  jpeg_quality: 10
  # Image settings
  name: Camera 1

binary_sensor:
  - platform: gpio
    pin:
      number: GPIO19
      mode: INPUT
      inverted: False
    name: Camera 1 Motion
    device_class: motion
    filters:
      - delayed_off: 1s
  - platform: gpio
    pin:
      number: GPIO15
      mode: INPUT_PULLUP
      inverted: True
    name: Camera 1 Button
    filters:
      - delayed_off: 50ms
  - platform: status
    name: Camera 1 Status

sensor:
  - platform: wifi_signal
    name: Camera 1 WiFi Signal
    update_interval: 10s
  - platform: uptime
    name: Camera 1 Uptime

i2c:
  sda: GPIO21
  scl: GPIO22

# This file has to exist when compiling
font:
  - file: "fonts/Roboto-Regular.ttf"
    id: tnr1
    size: 17

# Configuration for Mosquitto MQTT
text_sensor:
  - platform: mqtt_subscribe
    name: "Info for screen"
    id: infocamera
    topic: home/camera1_screen_text

display:
  - platform: ssd1306_i2c
    model: "SSD1306 128x64"
    address: 0x3C
    rotation: 180
    lambda: |-
      it.printf(0, 0, id(tnr1), "%s", id(infocamera).state.c_str());
```

Before uploading, ESPHome issues a key that must be entered later to integrate the device, so keep it until then.

Integrating MQTT for the Display

To make the most of the display, I decided to use MQTT to show relevant information. I manually configured the connection to the MQTT server and added the component needed for the display. MQTT has to be installed in Home Assistant, which can be done through the Mosquitto add-on. It is very easy to install. Then all that remains is to define a TOPIC where the queue's messages are written and read. In my case, home/camera1_screen_text. It does not need to have this structure, but using / helps organize different rooms.

I used this small script to test the Mosquitto setup I had installed in Home Assistant. I ran it from another computer connected to the network.

```python
import paho.mqtt.client as mqtt


def on_connect(client, userdata, flags, rc):
    print("Connected with result code " + str(rc))
    # Subscribing in on_connect() means that if we lose the connection and
    # reconnect then subscriptions will be renewed.
    client.subscribe("home/camera1_screen_text")


# The callback for when a PUBLISH message is received from the server.
def on_message(client, userdata, msg):
    print(msg.topic + " " + str(msg.payload))


# Written for paho-mqtt 1.x; on 2.x, pass mqtt.CallbackAPIVersion.VERSION1
# as the first argument to keep these callback signatures.
client = mqtt.Client()
client.on_connect = on_connect
client.on_message = on_message
client.username_pw_set("mosquitto", "6Y2TRQuNtjPHqEvC")
client.connect("homeassistant", 1883, 60)

# Blocking call that processes network traffic, dispatches callbacks and
# handles reconnecting.
# Other loop*() functions are available that give a threaded interface and a
# manual interface.
client.loop_forever()
```
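The script above only listens. To see text appear on the display, something has to publish to the same topic. Here is a minimal sketch of the sending side using paho's `publish.single` helper; the message text and the password placeholder are assumptions, and the broker host and user simply mirror the ones used above.

```python
TOPIC = "home/camera1_screen_text"


def build_publish_kwargs(text, username, password, hostname="homeassistant"):
    """Collect the arguments for paho.mqtt.publish.single()."""
    return {
        "topic": TOPIC,
        "payload": text,
        "hostname": hostname,
        "port": 1883,
        "auth": {"username": username, "password": password},
    }


def main():
    # Imported here so the helper above can be used without paho-mqtt installed.
    import paho.mqtt.publish as publish

    # "your_password" is a placeholder; use the Mosquitto user's real password.
    publish.single(**build_publish_kwargs("Hello screen", "mosquitto", "your_password"))


if __name__ == "__main__":
    main()
```

With the subscriber script running in another terminal, each call should show up there and on the OLED at the same time.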

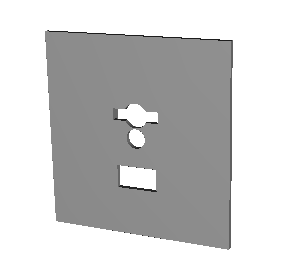

Carcasa impresa en 3D

He realizado una modificación en la carcasa para mi proyecto del termostato, inspirado en el artículo Control de Calefacción con Home Assistant. La nueva tapa tiene los agujeros para la cámara, pantalla, botones y sensor de movimiento.

A continuación, puedes ver la foto de la nueva tapa:

El nuevo modelo no tenía las medidas adecuadas por tanto la placa no se cabia en el marco, pero como referencia, aquí están los enlaces a los archivos STL:

Asi se vé ya instalada

Asi se vé ya instalada

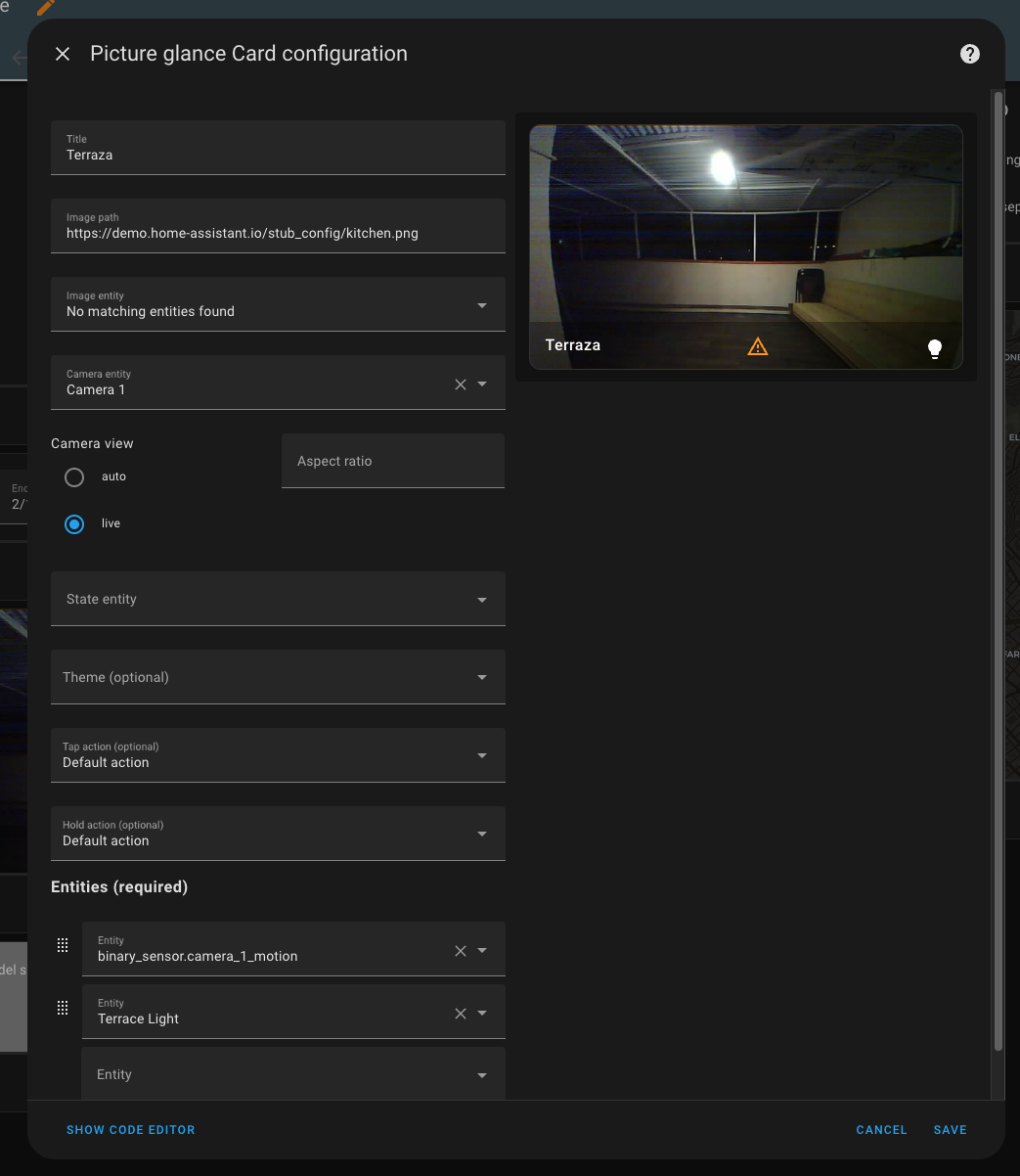

Configuración en Home Assistant

Aquí está la imagen de la configuración en HA, utilice una de las tarjetas predefinidas que pueden mostrar el video en la pantalla principal y ademas los botones para el sensor de movimiento y otras cosas que puedan haber en esa estancia (luces por ejemplo)

BONUS: Cámara autónoma

Es posible instalar un firmware que conecté la cámara a la red y asi poder acceder directamente a las imágenes. Se puede descargar desde “Documentación Original (YAML) - Importante para el Streaming” en los recursos de referencia.

Recursos Útiles

Reading lights with Home Assistant, ESP32 and LED strips

We are going to hide the bedroom night lights behind the bed and control them from Home Assistant. We will use the Home Assistant integration to sync switching on with the iPhone alarm and to have a quick on/off button.

This is a very simple project, since all the software and configuration is done through the UI and the same 5V from the ESP32's USB supply powers the lights.

Components

First, parts and components!

Raspberry Pi 4

My Home Assistant installation runs on a Raspberry Pi, but any installation would do. Installation instructions can be found on the Home Assistant website. (This would probably be the hardest part to get hold of; there is a stock shortage, although it is supposed to be back in 2023.)

ESP32

Any ESP32 should work. This can be checked on the ESPHome page. Mine are from AliExpress. It can also be found on Amazon: https://amzn.to/3jewNUA

1 m addressable LED strips

In my case, I am using these LED strips. The important thing is that they are WS2812B LED strips. These use the addressing type compatible with the software we will install on the ESP32.

ESP32 Configuration

We are going to install WLED, a special firmware for controlling lights of this type with built-in Home Assistant compatibility. For this, it is enough to

To connect the board to the LED strip we need 3 points: a GPIO to control the LEDs, a 5V pin and a GND. By default the firmware supports connecting the lights to the GPIO1 port without any other configuration.

There are many videos covering this step, for example: https://www.youtube.com/watch?v=TOEnFKLm9Sw

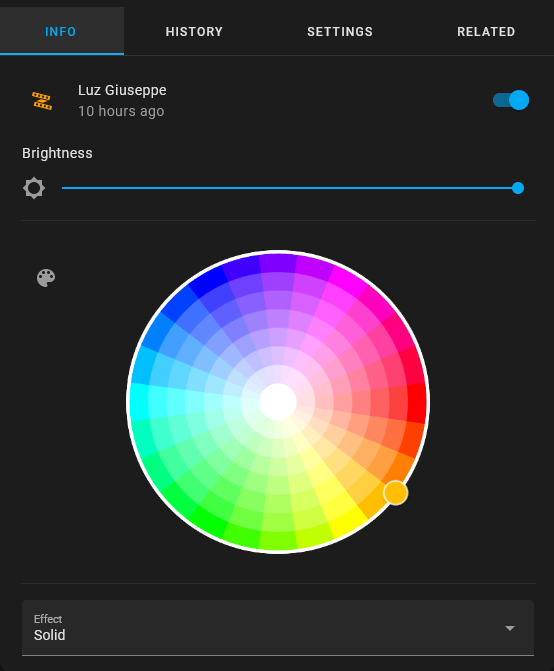

Configuration in Home Assistant

Once the firmware is installed and the ESP connected, Home Assistant should detect a new WLED device, which can be configured without problems and exposes all the light entities. The ones we will use here are color and brightness.

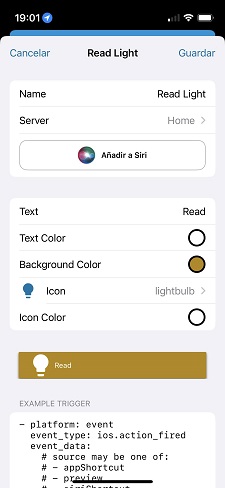

Create a quick-access button for switching on in iOS

The first automation needed is the on/off one. I chose to do it with the phone so I didn't have to design and build a physical button. The idea is as follows: the Home Assistant app lets you define actions on the phone that translate into EVENTS that can be read from Home Assistant. We will use one of these events to signal on/off. Then, through the HA widget for iOS, the button is easily accessible anywhere.

Step by step: in the app, go to Settings -> Companion App -> Actions -> Add. Here we only have to set a name and save the EXAMPLE TRIGGER shown at the end for later.

Then we go to Settings -> Automations & Scenes in Home Assistant and create a new automation. Inside the automation, click the three dots on the right to open the YAML editor and use the following:

```yaml
alias: Bedside light
description: Bedside light description
trigger:
  - platform: event
    event_type: ios.action_fired
    event_data:
      actionID: your_action_id
      actionName: Read Light
condition: []
action:
  - if:
      - condition: device
        type: is_on
        device_id: your_device_id
        entity_id: light.wled
        domain: light
    then:
      - type: turn_off
        device_id: your_device_id
        entity_id: light.wled
        domain: light
    else:
      - type: turn_on
        device_id: your_device_id
        entity_id: light.wled
        domain: light
        enabled: true
        brightness_pct: 20
mode: single
```

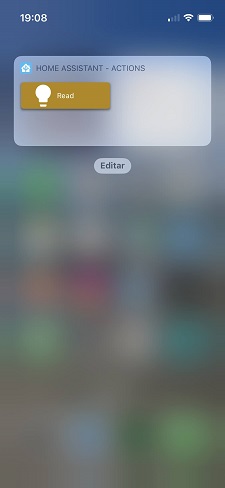

Replace the actionID with the value from the example trigger of the companion app action we saw earlier, and also the device and entity of the device added in Home Assistant. The same configuration can also be achieved using only the UI options. After that, all that remains is to add the Home Assistant widget with the actions, and we should have something like this:

Sync switching on with the iOS alarm

For this we will use iOS Shortcuts, which let us run an action when the phone's alarm goes off. The action will be to send an event to Home Assistant and, just as before, we will use it to turn the light on at full brightness. The name of the action is not relevant, as long as it is the same one we read from HA.

3D-printed box

To enclose the circuit I designed a simple box with a magnetic closure.

Next steps

As an improvement, I would like to add a manual power button on the bed. That way it is easier to switch the light on and off during the night without looking at the phone. The WLED firmware automatically leaves the GPIO0 pin as a switch: connecting it to ground toggles the light, so it would only be a matter of designing the button, with no software changes required.

Another improvement would be to add a motion sensor so that, when getting up at night, it activates two or three of the lower LEDs to light the way a little.

Boiler control with Home Assistant and ESP32

The easiest solution would have been a Nest, but well, where's the fun in that?

Warning: Some connections run at 220V. If you are not sure what you are doing, consult a professional.

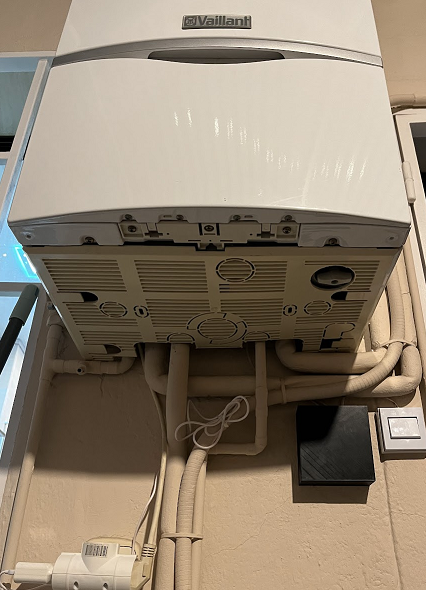

Original installation

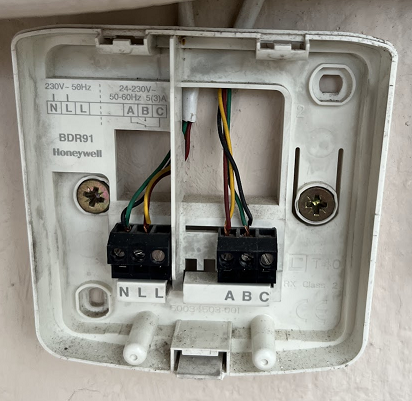

My current boiler installation had a wireless Honeywell thermostat which, after a bit of inspection, turned out to contain just a couple of relays. It seemed to drive the boiler with a single switch.

It is a thermostat module that sits inside the house and sends the RF signal to activate the relay.

Identifying the wiring

Two pairs of wires came down from the boiler: one 220V supply to power the wireless module, and another acting as a switch to turn the boiler on and off. [photo of wiring and boiler]

From videos and information about wiring and boiler types, it can be determined that A B is the wiring that starts the boiler. That is, the A B wires must go to our own relay. The N L wires are 220V mains that power the wireless module, which in my case I have not used because the ESP32 is powered by USB from a wall socket. A transformer board could have been added, but honestly I preferred not to get into converting those voltages.

New installation

Description

The idea is to use an ESP32 board with ESPHome connected to Home Assistant. The board drives a relay module that turns the boiler on and off from Home Assistant's thermostat interface.

Components

Let's first list all the necessary components.

Raspberry Pi 4

My Home Assistant installation runs on a Raspberry Pi, but any installation would do, as long as it has Bluetooth. The Xiaomi thermometers I use need Bluetooth. Installation instructions can be found on the Home Assistant website. (This would probably be the hardest part to get hold of; there is a stock shortage, although it is supposed to be back in 2023.)

ESP32

Any ESP32 should work. This can be checked on the ESPHome page. Mine are from AliExpress. It can also be found on Amazon: https://amzn.to/3jewNUA

Relay Module

Most of them work the same way; mine are from Amazon https://amzn.to/3HEgEBy

Thermometer

This is the component the temperature control will be based on. I had some Xiaomi ones that can be connected to Home Assistant with this tutorial. Specifically, the model is: https://es.aliexpress.com/item/1005005054100936.html

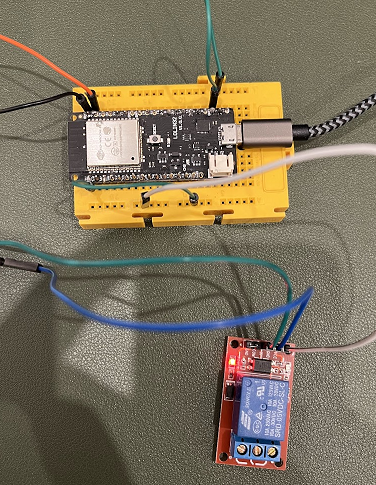

Circuito ESP32 y Relé

El circuito de control es muy sencillo, necesitaremos soldar 3 pines de la placa ESP32 al módulo Relé, uno de GPIO, cualquiera sirve, yo usé TODO. Luego necesitaremos un GND y un VCC. Suele haber un par de cada en las placas. Los de GND y VCC van al GND y VCC del módulo relé, y el GPIO al Signal line (en el caso del módulo del link mencionado anteriormente).

Cualquier tutorial de “controlar un rele con ESP32” mostrará una configuración similar, simplemente cambiarán que pines se usa.

Configuración del ESP32

Necesitaremos subir la configuración al módulo ESP32 para esto necesitaremos tener ESPHome en Home Assistant. Esto hay otras personas que lo explican mucho mejor que yo, por ejemplo aquí. El código que vamos a subir a la placa es el siguiente:

esphome:
  name: boiler-control

esp32:
  board: lolin32
  framework:
    type: arduino

# Enable logging
logger:

# Enable Home Assistant API
api:
  encryption:
    key: "ZQZysfdsfdsfsvc2+3rEbHA84g7uj9g="

ota:
  password: "93eb9a3fdsfdsfds684d23fadee4a"

wifi:
  ssid: !secret wifi_ssid
  password: !secret wifi_password

  # Enable fallback hotspot (captive portal) in case wifi connection fails
  ap:
    ssid: "Boiler-Control Fallback Hotspot"
    password: "dHFVhxEDkT0I"

captive_portal:

switch:
  - platform: gpio
    pin: 1
    name: smartthermostatrelay

Almost all of it is generated automatically when ESPHome is installed on the board, so none of that needs changing. We only have to add the part that configures our switch:

switch:
  - platform: gpio
    pin: 1
    name: smartthermostatrelay

This lets us refer to our boiler as smartthermostatrelay inside Home Assistant.

Configuration in Home Assistant

Once the thermometer is configured and our ESP32 switch is ready too, we only need to create the thermostat component, which lets us set the temperature we want. Home Assistant will do the rest.

This section must be added to the /config/configuration.yaml file, which can be accessed in many ways; I edited it with the File Editor add-on.

climate:
  - platform: generic_thermostat
    name: Termostato
    heater: switch.smartthermostatrelay
    target_sensor: sensor.termostato_temperature

The two important variables: heater is the relay we installed, and it must match the name we gave it. target_sensor is the reference temperature, and this sensor comes from whichever thermometer has been configured.

After restarting Home Assistant, the thermostat should be visible and can be set to the desired temperature.
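
To give an idea of what a thermostat like this does with the relay, here is a minimal Python sketch of on/off control with a small tolerance band. It is not Home Assistant’s actual code; the function name and the 0.3 degree tolerances are assumptions chosen for illustration.

```python
def heater_should_be_on(current_temp, target_temp, heater_is_on,
                        cold_tolerance=0.3, hot_tolerance=0.3):
    """Decide the next relay state from the reference temperature.

    The tolerances are illustrative assumptions: they keep the relay from
    toggling rapidly when the room hovers around the target.
    """
    if not heater_is_on:
        # Switch on only once the room is clearly colder than the target.
        return current_temp <= target_temp - cold_tolerance
    # Keep heating until the room is clearly warmer than the target.
    return current_temp < target_temp + hot_tolerance


if __name__ == "__main__":
    print(heater_should_be_on(20.0, 21.0, heater_is_on=False))
    print(heater_should_be_on(21.5, 21.0, heater_is_on=True))
```

The gap between the two thresholds is what avoids clicking the relay on and off every few seconds.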

To access it from outside the house and turn the heating on or off before getting home, I use Home Assistant’s own cloud service, but according to some tutorials it can also be configured manually.

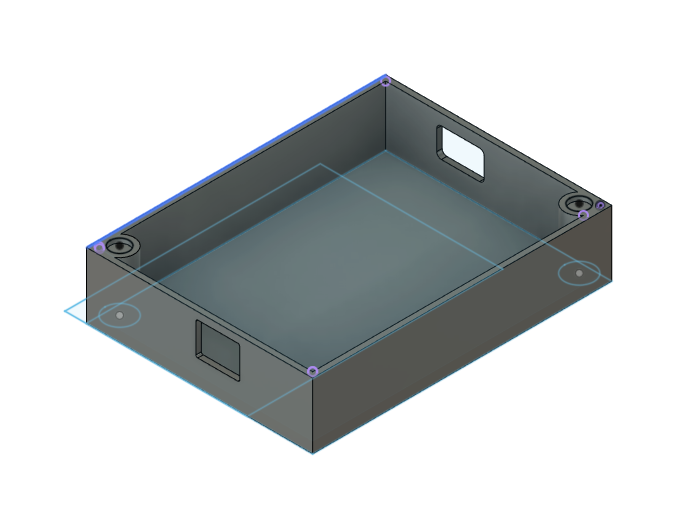

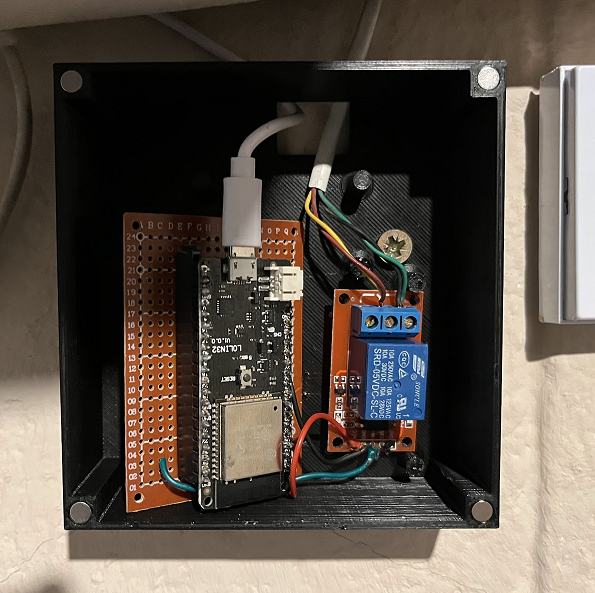

3D-printed case

To protect the components and mount everything under the boiler, I designed a case with screw holes matching the ones already in the wall from the old thermostat. For the lid I left small recesses to glue in some magnets and make a magnetic closure. I tried to add standoffs for screwing the boards down, but they did not come out with the right dimensions, so I simply glued the components in place. Not ideal, but it works.

With everything installed under the boiler, it ended up like this:

Next steps

The first thing I would like to improve is the temperature across rooms. I have several more thermometers installed in different rooms, but for now only one serves as the reference. One improvement would be to use HA’s template sensors and switch the reference thermometer depending on the time of day or a particular scene. It could also be based on the average of all the thermometers.
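
The selection logic for the reference temperature could look roughly like the Python sketch below. In Home Assistant this would live in a template sensor; the room names, the schedule and the function names here are all hypothetical and only illustrate the idea.

```python
from datetime import time
from statistics import mean

# Hypothetical schedule: (start, end, room used as reference in that window).
SCHEDULE = [
    (time(7, 0), time(22, 0), "living_room"),
]
NIGHT_ROOM = "bedroom"  # hypothetical fallback outside the schedule


def reference_room(now):
    """Pick the room whose thermometer should drive the thermostat."""
    for start, end, room in SCHEDULE:
        if start <= now < end:
            return room
    return NIGHT_ROOM


def reference_temperature(readings, now, use_average=False):
    """readings maps room name to temperature, or None if unavailable."""
    available = {room: t for room, t in readings.items() if t is not None}
    if not available:
        raise ValueError("no thermometer readings available")
    average = mean(available.values())
    if use_average:
        return average
    # Fall back to the average if the chosen thermometer has no reading.
    return available.get(reference_room(now), average)


if __name__ == "__main__":
    readings = {"living_room": 21.0, "bedroom": 19.0}
    print(reference_temperature(readings, time(12, 0)))
    print(reference_temperature(readings, time(23, 30)))
```

Falling back to the average when a thermometer is unavailable is a design choice of this sketch; Bluetooth sensors drop out now and then, and the thermostat should still have something to work with.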

The step after that would be to integrate the air conditioning units into the system too. HA with ESPHome can also control infrared devices, such as the air conditioner remotes. Once integrated, the units could run in heating mode when the temperature drops a lot and the boiler is not enough, and the same thermostat would also be ready to control them in summer.

A bit on online multiplayer games in Godot

I wanted to try something related to programming but as different as possible from what I do day to day as a software developer, so I went back to the Godot engine. I had downloaded it and tried it out for the first time during the first months of the pandemic, did a couple of tests with some 3D models from Blender, and loved it. This time I wanted to learn a bit about how multiplayer would work in a simple 2D game. Just a couple of players and some guns.

The players should see each other in sync and be able to shoot, take damage and die. Simple enough. Some of the tutorials I was following also suggested a lobby and waiting room, so I added them, because why not.

A quick YouTube search took me to one excellent series on multiplayer in Godot. It has basically all you need to get started, plus an explanation of the networking concepts. I skipped everything related to authentication since I’m obviously not there yet, but all the rest was perfectly applicable to my 2D fighter platformer.

It was easy enough to find tutorials on basic 2D movement to let my player move around, and another on how to create tilemaps for the world. I used some free game art from https://opengameart.org/ and moved on to the multiplayer tutorials. The following were my main pain points while understanding the code itself.

Interpolation

For me, the most difficult concept to grasp in multiplayer was interpolation: having players play slightly in the past, so that the client has enough information to interpolate movement between the not so recent past and the recent past. This concept is explained much better in episode 12 of Game Development Center’s series.

Here’s another document that can help in understanding the justification behind this solution: entity-interpolation
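
As a rough illustration of the idea (in Python rather than GDScript, and not the code from the series), the sketch below renders a remote player a fixed amount of time in the past and blends between the two buffered server states around that moment. The 100 ms offset, the buffer layout and the function name are assumptions.

```python
INTERPOLATION_OFFSET_MS = 100  # assumed delay: how far in the past we render


def interpolate_position(state_buffer, client_clock_ms):
    """Return the (x, y) position to draw for a remote player.

    state_buffer is a list of (timestamp_ms, (x, y)) tuples sorted by time,
    as received from the server.
    """
    if not state_buffer:
        return None
    render_time = client_clock_ms - INTERPOLATION_OFFSET_MS
    # Look for the two states surrounding render_time and blend between them.
    for (t0, p0), (t1, p1) in zip(state_buffer, state_buffer[1:]):
        if t0 <= render_time <= t1:
            factor = (render_time - t0) / (t1 - t0) if t1 > t0 else 0.0
            return (p0[0] + (p1[0] - p0[0]) * factor,
                    p0[1] + (p1[1] - p0[1]) * factor)
    # Not enough information yet: clamp to the closest known state.
    if render_time < state_buffer[0][0]:
        return state_buffer[0][1]
    return state_buffer[-1][1]


if __name__ == "__main__":
    buffer = [(1000, (0.0, 0.0)), (1050, (10.0, 0.0)), (1100, (20.0, 10.0))]
    print(interpolate_position(buffer, client_clock_ms=1125))
```

Rendering in the past is what guarantees that there is usually a newer state to interpolate towards, instead of having to guess where the other player is going.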

Clock Synchronization

Another issue is that we need to account for the latency in server-client communication. When that is not working, you may run into issues like this one:

Multiplayer is difficult 🥹🥹🥹 #gamedev #GodotEngine pic.twitter.com/zfqINjIWvc

— Giuseppe Moretti (@gmoretti) July 17, 2022

Here the clock in the bottom right instance is behind, so actions coming from the server do not get “reproduced” at the correct time.

Once the time is compensated, you can use that same clock to account for damage and/or deaths. The server sends a timestamp alongside this information when informing clients, and the clients wait for their clocks to reach that timestamp before executing the actions.

This is the code in the client. We don’t react to the damage until the local clock has reached the damage time.

# keys (damage variable) in damage_dict are timestamps
func ReceiveDamage():
    for damage in damage_dict.keys():
        if damage <= Server.client_clock:
            # Reacting to the damage
            current_hp = damage_dict[damage]["Health"]
            damage_dict.erase(damage)
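
The clock compensation mentioned above can be estimated in several ways. The Python sketch below shows one common approach, assuming latency is roughly symmetric: the client timestamps a request, the server replies with its own time, and half the round trip is taken as the one-way latency. The function names are hypothetical and this is not the code from the series.

```python
def estimate_clock(client_send_ms, server_time_ms, client_receive_ms):
    """Estimate one-way latency and the offset between local and server time.

    Assumes the trip to the server takes as long as the trip back.
    """
    round_trip = client_receive_ms - client_send_ms
    latency = round_trip / 2
    # Server time "now" is the reported time plus the trip back to us.
    offset = server_time_ms + latency - client_receive_ms
    return latency, offset


def due_events(pending, client_clock_ms):
    """Pop and return the events whose timestamp has been reached.

    pending maps timestamp_ms to an event, like damage_dict above.
    """
    due = [ts for ts in sorted(pending) if ts <= client_clock_ms]
    return [pending.pop(ts) for ts in due]


if __name__ == "__main__":
    latency, offset = estimate_clock(1000, 5040, 1080)
    print(latency, offset)
    pending = {5100: {"Health": 80}, 5300: {"Health": 60}}
    print(due_events(pending, client_clock_ms=5200))
```

Repeating the measurement periodically and smoothing the result is one possible way to keep the offset from drifting over a long session.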

Now, once latency and clock are managed, things start to look much better.

Some progress on the latency and damage

— Giuseppe Moretti (@gmoretti) July 18, 2022

🙃#GodotEngine pic.twitter.com/gSpsrwXFMz

Notes on Godot

What I liked the most was the speed of iteration: Godot is very lightweight and launching a scene is super quick. Since I did not know any engines, I first tried Unity and felt like everything was too big, at least for what I needed. I had to get used to how a tiling system works, and although that is not specific to Godot, its tiling features were quite easy to work with once you know how, of course.

On the other hand, strings are used a lot to connect things: string paths to refer to nodes and to signal callbacks. This is cool at the beginning, but it makes refactoring a little more cumbersome.

It was interesting to notice how the whole communication was simpler than I expected. One server process handles one game. If you want more rooms, you need more instances of the server process. Building on this principle, you can use all sorts of techniques to spawn your server processes, like basic scripting or containerized solutions. But the complexity at the bottom remains the same: one instance of the server handles one game.

What have I learned

multiplayer from 2 different machines, server handling collisions and interpolation between states. I do have some syncing issues after 10+min because of drfting clock tho #GodotEngine #gamedev 🤓 thanks @GamedevStefan for the amazin tutorials 👏👏 pic.twitter.com/zKdkcuIR4j

— Giuseppe Moretti (@gmoretti) July 31, 2022

What’s next

The most difficult part for me was the clock and interpolation. I would like to create a new project and code just the multiplayer sync part again, to understand how to improve it and why I am getting sync issues after some time connected to a game.

I would also like to try a local co-op style game, focusing on gameplay rather than networking. The idea would be to try out different mechanics in separate test projects, as assignments to grasp concepts.

Links and thanks!

Links to the github repos with the server and client projects.

https://github.com/gmoretti/godot-online-multiplayer-fighter-client

https://github.com/gmoretti/godot-online-multiplayer-fighter-server

The amazing series where all this is actually explained, from Game Development Center: https://www.youtube.com/watch?v=lnFN6YabFKg&list=PLZ-54sd-DMAKU8Neo5KsVmq8KtoDkfi4s

Another multiplayer series for Godot, from Rayuse, from which I learned how to create the lobby and waiting room: https://www.youtube.com/watch?v=MGo06JvYkrA&list=PLRe0l8OGr7rcFTsWm3xyfCOP4NpH72vB1

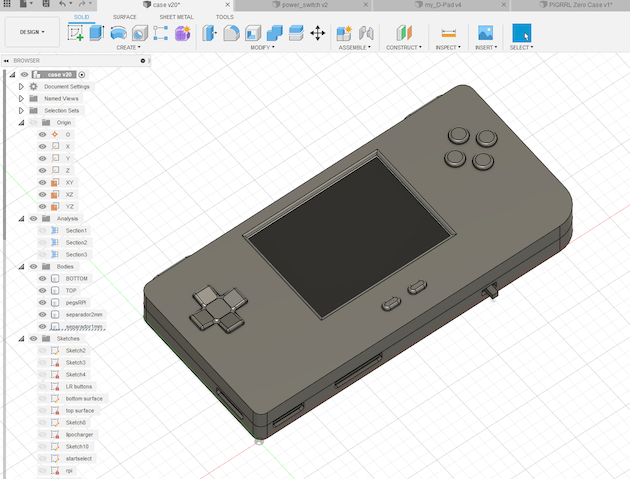

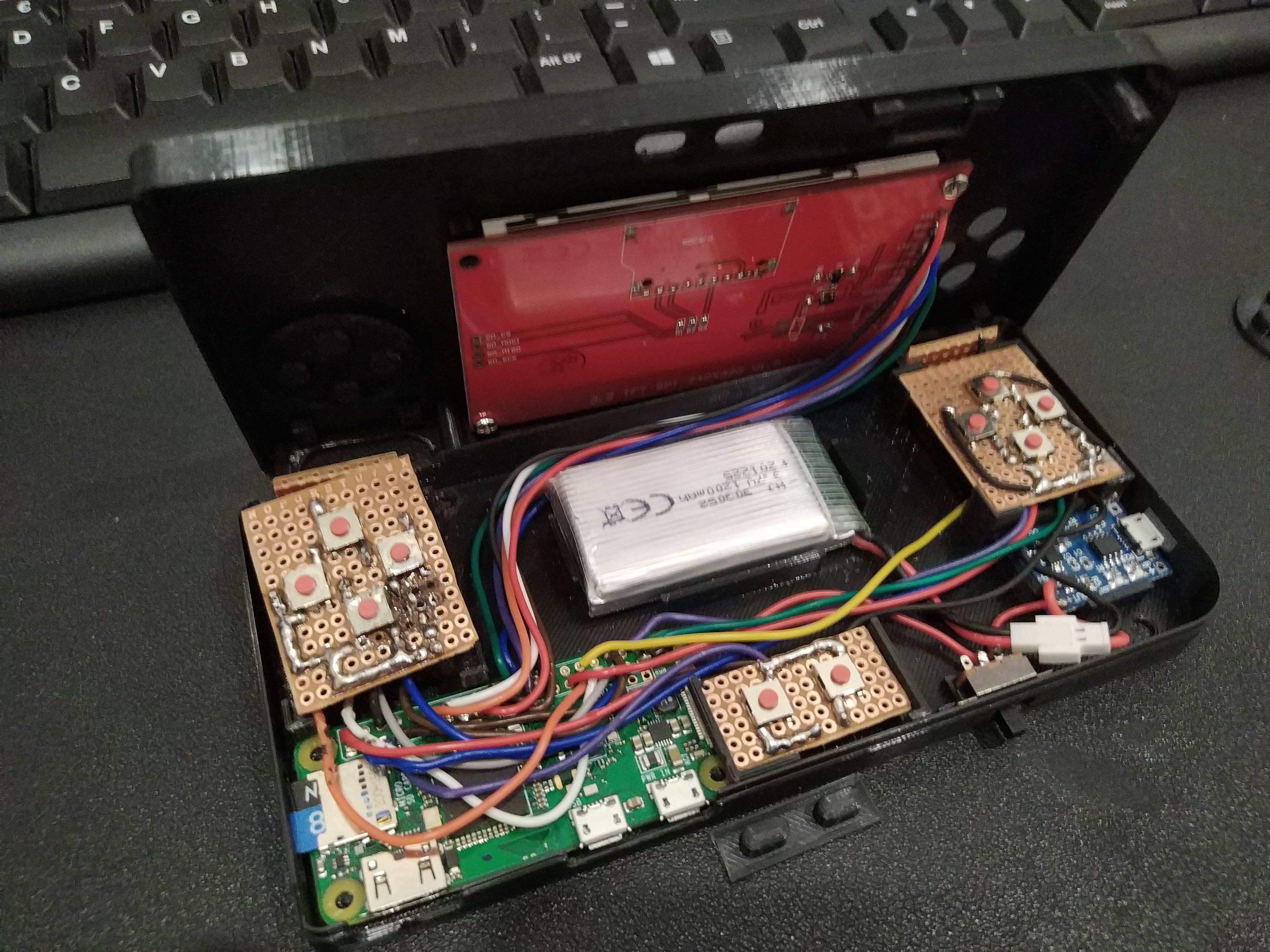

Building my own retro game handheld

So, after going through the Thingiverse library looking for cool prints, I thought one of the coolest was Adafruit’s Pi GRRL Zero. I liked the shape: it was compact but big enough to have a comfortable grip.

The design was pretty cool, but I thought the screen was a little too small. Since the Pi GRRL had a whole guide on how to build the handheld, some assembly videos, and even some software and scripts, I decided to remix the case. It was an excellent reference given my limited experience with electronics.

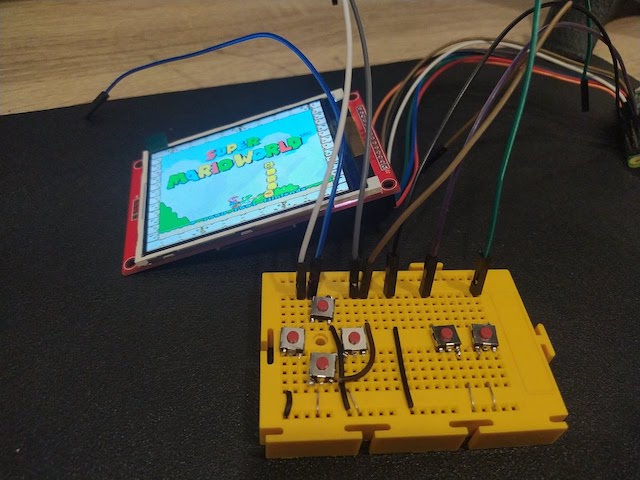

Prototyping

I started by assembling the components on a protoboard, connecting the Raspberry Pi and the screen and checking the configuration. I also did a fresh installation of RetroPie on my Pi Zero.

Screen

The trickiest part here was wiring the screen properly for the LCD you have and compiling the right driver. My screen was a 3.5" LCD like this one. I took the source code for this driver and changed the compilation instructions a little to fit this particular screen. I followed the instructions from this forum, only changing the last compilation line to:

## set driver options

cmake -DSPI_BUS_CLOCK_DIVISOR=6 -DGPIO_TFT_DATA_CONTROL=25 -DGPIO_TFT_RESET_PIN=27 -DGPIO_TFT_BACKLIGHT=18 -DSTATISTICS=0 -DBACKLIGHT_CONTROL=ON -DUSE_DMA_TRANSFERS=OFF -DILI9341=ON

This, plus an “ili9341 wiring pi zero” search on Google Images, gave me a hint on how to wire the screen to the Pi Zero.

Buttons

For the buttons, once again Adafruit and the PiGRRL came to the rescue. They have a super easy to install script that configures the GPIO pins to work as buttons, and through its configuration file I could set which pin is used for which button. I just followed the steps from Adafruit. As a reminder, physical pin numbers and GPIO numbers are different things, so it’s nice to have the Pi’s pinout on hand. I left the config file as follows:

# Sample configuration file for retrogame.

# Really minimal syntax, typically two elements per line w/space delimiter:

# 1) a key name (from keyTable.h; shortened from /usr/include/linux/input.h).

# 2) a GPIO pin number; when grounded, will simulate corresponding keypress.

# Uses Broadcom pin numbers for GPIO.

# If first element is GND, the corresponding pin (or pins, multiple can be

# given) is a LOW-level output; an extra ground pin for connecting buttons.

# A '#' character indicates a comment to end-of-line.

# File can be edited "live," no need to restart retrogame!

# Here's a pin configuration for the PiGRRL Zero project:

LEFT # Joypad left

RIGHT 19 # Joypad right

DOWN 16 # Joypad down

UP 26 # Joypad up

Z 20 # 'A' button

X 21 # 'B' button

#A 16 # 'X' button

#S 19 # 'Y' button

Q 20 # Left shoulder button

W 21 # Right shoulder button

ESC 17 # Exit ROM; PiTFT Button 1

LEFTCTRL 22 # 'Select' button; PiTFT Button 2

ENTER 23 # 'Start' button; PiTFT Button 3

4 27 # PiTFT Button 4

# For configurations with few buttons (e.g. Cupcade), a key can be followed

# by multiple pin numbers. When those pins are all held for a few seconds,

# this will generate the corresponding keypress (e.g. ESC to exit ROM).

# Only ONE such combo is supported within the file though; later entries

# will override earlier.

You can find the button installer here: https://learn.adafruit.com/retro-gaming-with-raspberry-pi/adding-controls-software
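
Since the config file uses Broadcom (GPIO) numbers while the wires are soldered to physical header pins, a small helper can make the translation less error-prone. The Python sketch below is only an illustration: the parser is a simplified reading of the format described in the file’s comments, the function names are made up, and the lookup table covers only the GPIOs used above on the 40-pin header.

```python
# Broadcom GPIO number -> physical pin on the 40-pin Raspberry Pi header
# (only the GPIOs that appear in the config above).
BCM_TO_PHYSICAL = {16: 36, 17: 11, 19: 35, 20: 38, 21: 40,
                   22: 15, 23: 16, 26: 37, 27: 13}


def parse_retrogame_config(text):
    """Return {key_name: [gpio, ...]} from retrogame-style config text."""
    mapping = {}
    for raw_line in text.splitlines():
        line = raw_line.split("#", 1)[0].strip()  # drop comments
        if not line:
            continue
        key, *pins = line.split()
        if not pins:
            continue  # a key with no pin number cannot be wired
        mapping[key] = [int(pin) for pin in pins]
    return mapping


def shared_gpios(mapping):
    """Return the GPIOs assigned to more than one key, with those keys."""
    users = {}
    for key, pins in mapping.items():
        for pin in pins:
            users.setdefault(pin, []).append(key)
    return {pin: keys for pin, keys in users.items() if len(keys) > 1}


if __name__ == "__main__":
    sample = "RIGHT 19 # Joypad right\nZ 20 # 'A' button\nQ 20 # Left shoulder\n"
    config = parse_retrogame_config(sample)
    for key, pins in config.items():
        print(key, [BCM_TO_PHYSICAL[pin] for pin in pins])
    print(shared_gpios(config))
```

Checking for shared GPIOs is handy with a file like this one, where the same numbers show up on more than one line.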

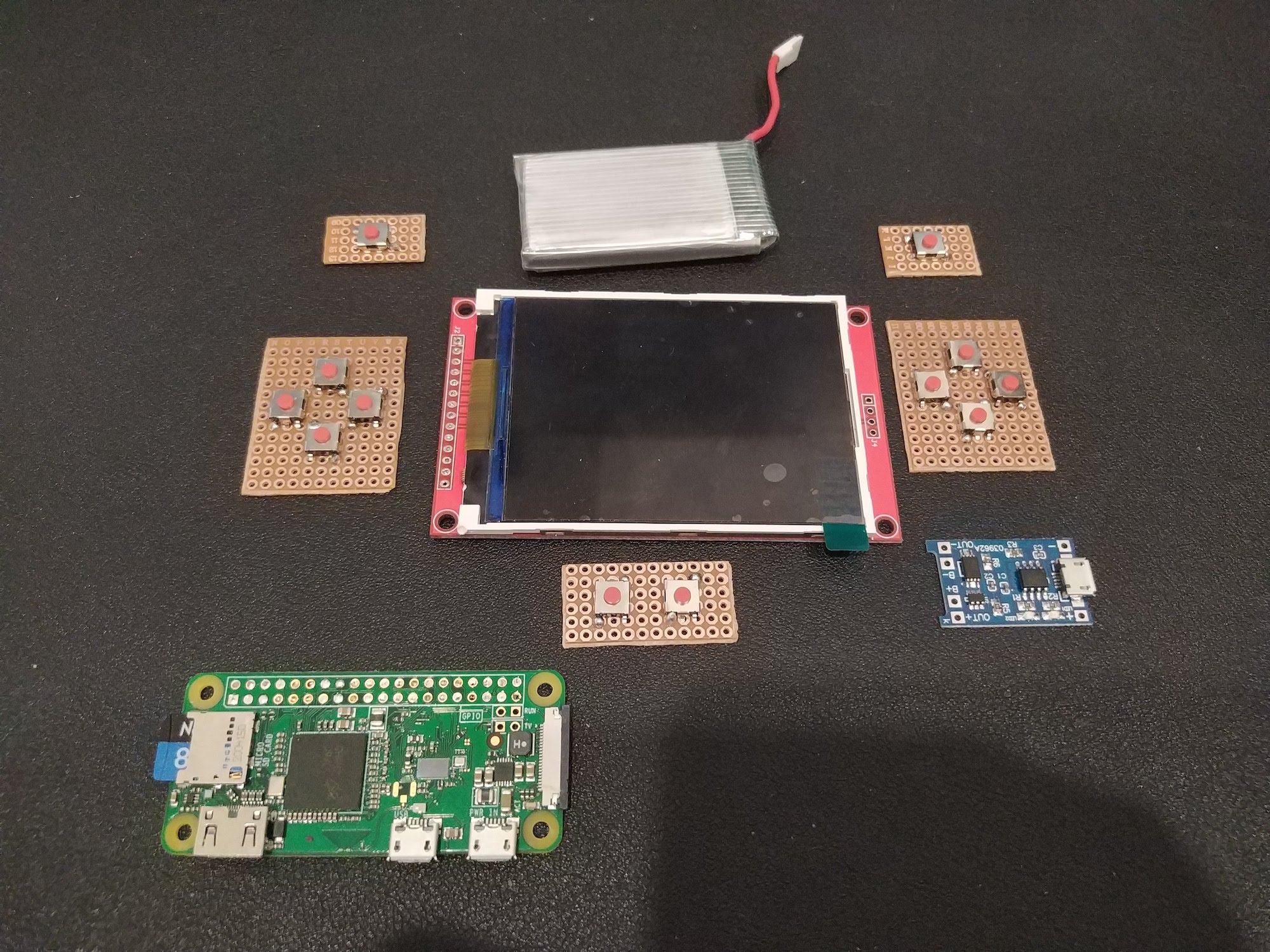

Battery

For the battery I used a LiPo from AliExpress and a charge controller from the same shop. These were cheap and really easy to use. I have used them in many projects already and they worked like a charm, so I just had to fit the controller and the battery properly in the case.

Example of the battery type: https://es.aliexpress.com/item/4000369075771.html And the USB charge controller: https://es.aliexpress.com/item/4000956074114.html

Case design

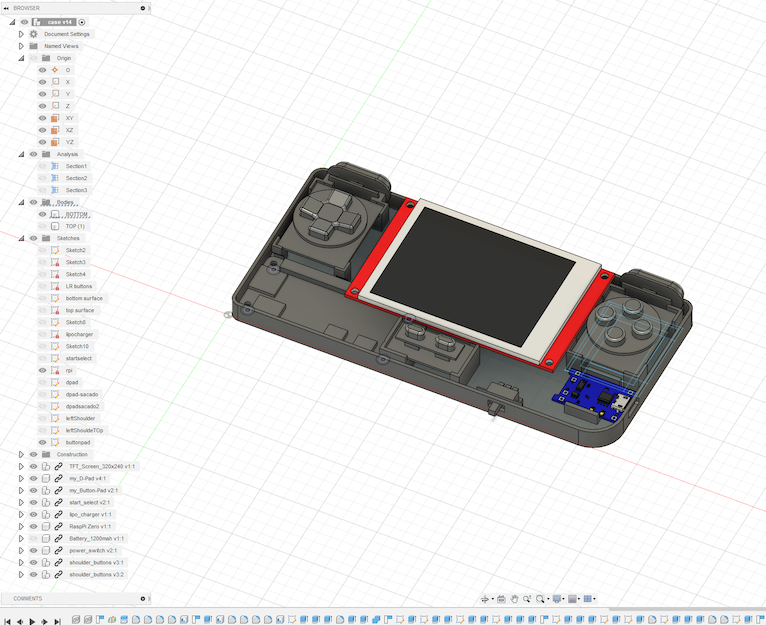

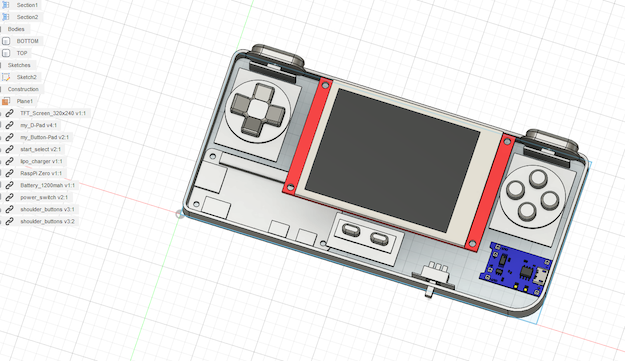

Components

The approach for the case was to first lay out all the components I was going to use and model them in Fusion 360 as a reference for the design. I also used GrabCAD to get premodeled components (the Pi, the screen, etc.).

Model

I followed Noe's PiGRRL tutorial https://www.youtube.com/watch?v=uVGKswaW5OQ but changed the parts I was interested in changing: the screen size and the fit of the components I had. My buttons were soldered onto proto PCBs that I cut myself. It took several prints to get the right size, and the case has a fundamental flaw: I did not think about the screws, and in the end I could only fit two, in weird positions, which means the case does not close correctly. I also really wanted the Pi ports to be accessible, which was only possible by putting the Pi in the corner, where a screw was supposed to go.

An improvement I will consider is making a snap-fit case. I did some tests, but my Fusion 360 skills were not up to the task.

All the STL files can be found in Thingiverse: https://www.thingiverse.com/thing:5385477

Assembling

Possibly the most painful part of this project was soldering the cables to the Pi, soldering the rest of the cables and then fitting all those cables in. Of course, designing and using a prefab PCB would have been much easier, but what’s the fun in that? For closing the case with the screen, my printer does not have enough resolution to print threads, so Google to the rescue: by heating my M3 screws and slowly screwing them into the plastic, I was able to form the threads. They are by no means strong or durable, and I had to be very careful not to break them when taking the screws out, but it did the trick.

Another assembly issue was that the screws for the TFT were too long, so I had to print some spacers to make them fit.

Play!

As with the PiGRRL, I installed the RetroPie image and a couple of games to test. This was actually the easy part. From the same PiGRRL guide I followed some tips to increase performance. Most games are playable, but in some cases I did notice performance issues. Hopefully the next iteration of the Pi Zero will have enough horsepower for SNES and Game Boy, which at the end of the day are the ones I enjoy the most.

You can see the Pi in action

New case V2 red andsome Zelda testing #3Dprinting #RETROGAMING

— Giuseppe Moretti (@gmoretti) April 3, 2021

:) pic.twitter.com/7AvXvOvjSE

A lot can be improved in this design and the project, but for a first design and prototype I think it is more than usable. I hope to return to it sometime in the future.

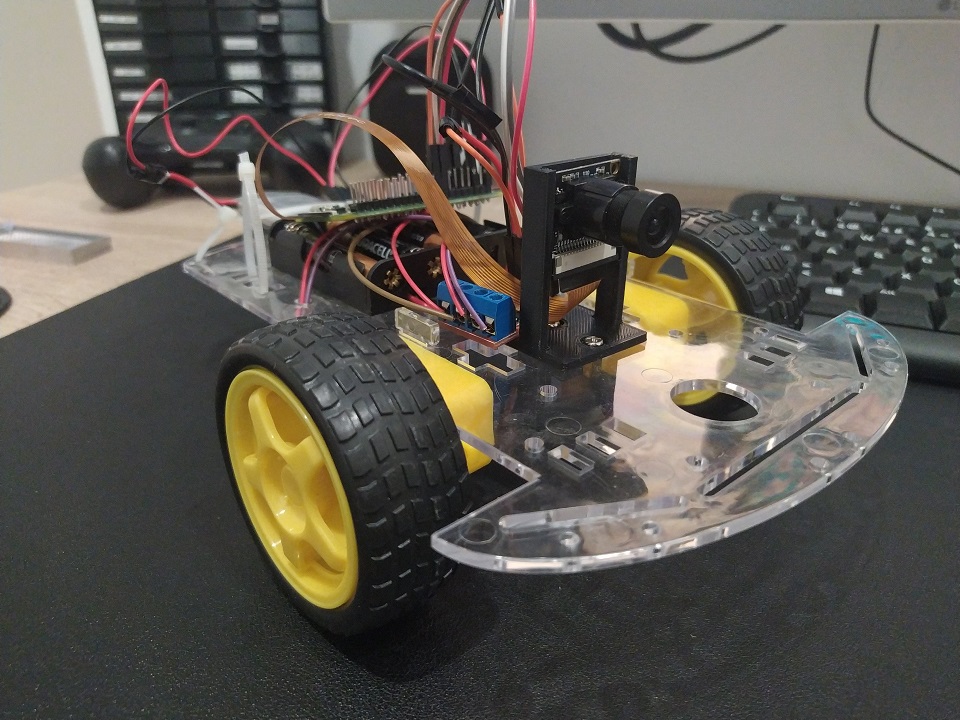

FPV robot with Raspberry Pi

Not so long ago I bought one of those Amazon 2WD robot kits with a frame and 2 motors. These motors work out of the box with the Raspberry Pi’s Motor API, provided you have a motor control bridge like this one interfacing with them.

So I thought: just add a camera and you have yourself an online FPV robot.

Parts

Interfacing with the motors

I found a way to connect the motors and a very nice example of how to interface with them on the official Raspberry Pi project page, here. It also showed me how the H-bridge provides power and direction to the motors.

WebSockets Server

With that Python example in hand, I wanted to try a server using WebSockets to take advantage of the speed of a connection that is always open.

I took the example and created an event that is triggered by a message from our client app (covered later) and accepts a value for each motor, where 1 is full power forward and -1 is full power backwards. With that you get all 5 possible movements: forward, backwards, left, right and stop.

from flask import Flask
from flask_socketio import SocketIO
from gpiozero import Motor

# GPIO pairs depend on your wiring. I use these, but any would do.
motor1 = Motor(27, 17)
motor2 = Motor(24, 23)

app = Flask(__name__)
app.config['SECRET_KEY'] = 'secret!'
socketio = SocketIO(app, cors_allowed_origins='*')


@socketio.on('move_motors')
def handle_message(data):
    # Each value goes from -1 (full backwards) to 1 (full forward)
    left = data['data']['left']
    right = data['data']['right']
    print('left: ' + str(left))
    print('right: ' + str(right))

    if left > 0:
        motor1.forward(left)
    elif left < 0:
        motor1.backward(abs(left))
    else:
        motor1.stop()

    if right > 0:
        motor2.forward(right)
    elif right < 0:
        motor2.backward(abs(right))
    else:
        motor2.stop()


if __name__ == '__main__':
    # Listen on all interfaces on port 49152 instead of the default localhost:5000
    socketio.run(app, host='0.0.0.0', port=49152)

I ran this as the server controlling the motors. SocketIO is one of the de facto libraries for working with WebSockets. It is very easy to use and also has a Python port. I basically followed the guide on the official website to get things up and running.
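
The per-motor decision in the handler can be pulled into a small pure function, which makes it easy to test without a Pi attached. The sketch below is a hypothetical refactor, not part of the original server: it also clamps the incoming value to the -1 to 1 range, on the assumption that a client might send something out of range. The five movements use the same left and right values as the JS client.

```python
# The five movements, as (left, right) motor power.
MOVES = {
    "forward": (1, 1),
    "backwards": (-1, -1),
    "left": (1, -1),
    "right": (-1, 1),
    "stop": (0, 0),
}


def motor_command(value):
    """Translate a signed power value into (action, speed).

    The value is clamped to [-1, 1]; the returned speed is always
    between 0 and 1.
    """
    power = max(-1.0, min(1.0, float(value)))
    if power > 0:
        return ("forward", power)
    if power < 0:
        return ("backward", -power)
    return ("stop", 0.0)


if __name__ == "__main__":
    for name, (left, right) in MOVES.items():
        print(name, motor_command(left), motor_command(right))
```

In the handler, the returned action name would select motor.forward, motor.backward or motor.stop.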

USB Charging

The little TP4056 boost circuit module can charge a LiPo battery, serve as the power input for the Pi, or both, without worrying about harming the LiPo. The circuit prevents overcharge and overdischarge. The module itself is pretty self-explanatory: it has + and - for the battery and + and - for the load, and the latter go to the Pi on pins 2 (5V) and 6 (GND).

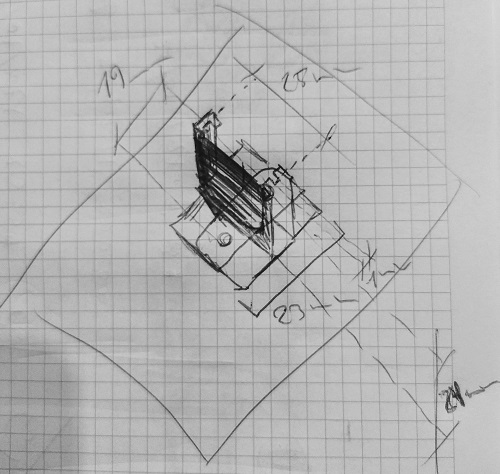

3D printed camera stand

The camera module did not come with any support or case, and although its size is pretty similar to the official Raspberry Pi camera module, I wanted to have a go at designing and printing a simple stand for my tests. I sketched a very simple support that I could screw into the plastic frame. You can find it here on Thingiverse.

Video stream

One of the main parts is the video feed. There were a few options out there. The simplest was sending single frames and feeding a video HTML tag. In my tests this proved very slow, giving me framerates of 15 FPS or less on the Pi Zero. The whole idea was to have latency low enough to control the robot from outside my network.

I also wanted to give WebRTC a shot, the new thing in browser comms. I first tried a WebRTC module for uv4l. It worked but was kind of unstable, probably due to some misconfiguration. Then I realised the project was not open source, so I couldn’t look inside. I then found RWS, which had super low latency and worked out of the box with almost no config.

Motor control JS client

RWS also comes with an embedded web server, so I could modify its demo index file to include the motor control client that connects to the Flask SocketIO server we just discussed. This was also fairly simple: include the SocketIO JavaScript library and write a script that detects key presses and sends them to the Flask server, where they get transferred to the motors.

<script type="text/javascript" charset="utf-8">
  var socket = io('ws://your-flask-socket-io-server:49152');

  socket.on('connect', async function() {
    // Power per motor: 1 is full forward, -1 is full backwards
    const forward = {left: 1, right: 1};
    const backwards = {left: -1, right: -1};
    const left = {left: 1, right: -1};
    const right = {left: -1, right: 1};
    const stop_motors = {left: 0, right: 0};

    document.addEventListener('keydown', (event) => {
      // Ignore the auto-repeat events fired while a key is held down
      if (event.repeat) return;
      const keyName = event.key;
      if (keyName === 'w') {
        console.log('going forward');
        socket.emit('move_motors', {data: forward});
      } else if (keyName === 's') {
        console.log('going backwards');
        socket.emit('move_motors', {data: backwards});
      } else if (keyName === 'a') {
        console.log('going left');
        socket.emit('move_motors', {data: left});
      } else if (keyName === 'd') {
        console.log('going right');
        socket.emit('move_motors', {data: right});
      }
    });

    // Releasing any key stops both motors
    document.addEventListener('keyup', (event) => {
      const keyName = event.key;
      console.log('keyup event\n\n' + 'key: ' + keyName);
      socket.emit('move_motors', {data: stop_motors});
    });
  });
</script>

Notice that the emit targets the event we defined before. The default page for the video feed is already super nice, so no more changes were needed for this experiment.

External Access

To access everything from the outside world, ports needed to be opened and forwarded.

WARNING: Nothing exposed here is secured, and opening ports in your router could be dangerous.

Since I have a dynamic IP, I had to use a dynamic DNS provider (I used NoIP) to make it easily accessible from outside. The ports I needed to forward were 8889 (WebRTC RWS server) and 49152 (Flask motor control over WebSocket).

Installation Steps!

1. Activate Camera Interface

First we need to activate the camera module. We can do it graphically with the config wizard.

sudo raspi-config

Select Interface Options, then Camera, and answer YES.

2. Download and install RWS somewhere accessible

I have a Pi Zero, but other builds can be found in the same repository.

wget https://github.com/kclyu/rpi-webrtc-streamer-deb/raw/master/rws_0.74.1_RaspZeroW_armhf.deb

3. Update system, install dependencies and the RWS websocket video server

sudo apt update

sudo apt full-upgrade

sudo apt install libavahi-client3

sudo apt install python-rpi.gpio python3-rpi.gpio

sudo dpkg -i rws_0.74.1_RaspZeroW_armhf.deb

4. Tweaking the default webserver page

We change it to connect without HTTPS and also add the connection to the Flask SocketIO server that controls the robot.

[insert the changes in here]

In the repo with the Flask server code I also put a modified copy of the RWS index page.

cd /opt/rws/web-root #the folder np2 contains the client

sudo cp -r ~/rpi-car/statics/rws-modded-default/. .

Restart the video service:

sudo systemctl restart rws

Changes should now be visible in http://your-private-ip-address:8889/np2/ and you should be able to see the video feed.

5. Install SocketIO for Python (the server imports flask_socketio) and eventlet to support concurrency

pip3 install flask-socketio python-socketio

pip3 install eventlet

6. Run motor control server

From the rpi-car folder run the flask server with python3

python3 flask-server.py &

7. Open ports in router

Open ports 8889 and 49152 and forward them to the Pi Zero’s address to make them accessible from outside. Be careful: nothing is secured with SSL, so it’s not safe. Use it, test it, and then find a way to secure it.

At this point, if you also configured a dynamic DNS service, you should be able to reach these ports through the dynamic DNS URL.
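
A quick way to confirm that the forwarded ports are reachable is to attempt a TCP connection to each of them. The Python sketch below uses only the standard library; the hostname is a placeholder for your own dynamic DNS name, and the check is only meaningful when run from outside your home network.

```python
import socket


def port_is_open(host, port, timeout=3.0):
    """Return True if a TCP connection to host:port succeeds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts and DNS failures.
        return False


if __name__ == "__main__":
    host = "your-dyn-dns-hostname.example"  # placeholder, replace with yours
    for port in (8889, 49152):
        print(port, "open" if port_is_open(host, port) else "closed")
```

This only tells you that something accepts connections on the port, not that the video or motor server behind it is working.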

How does it look?!

Here’s a quick demo where you can more or less see the latency and the control.

Next steps!

This is only a tiny proof of concept. There are so many ideas to improve it, but I’d like to start with a few:

Working on my personal website: Part 1

So it’s been a while since I gave my website some love. I started by moving my domain back to my original provider (it’s been 1&1 for ages, no time to change it) so I could host the website there (hosting is free and already attached to the domain morettigiuseppe.com, so it was easier to leave it like that).

This worked OK, and I took advantage of the opportunity to give my blog a custom domain with a CNAME record on the blog subdomain.

Cool. Before starting any redesign, I wanted to automate deployment on push to the master branch of my GitHub project. This is now extremely easy with GitHub Actions.

My hosting accepts file deployments via SFTP, so all I need is a GitHub Action that does this. My workflow now looks like this:

on: push
name: Deploy SFTP
jobs:
  FTP-Deploy-Action:
    name: FTP-Deploy-Action
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@master
      - name: FTP-Deploy-Action
        uses: SamKirkland/FTP-Deploy-Action@2.0.0
        env:
          FTP_SERVER: $
          FTP_USERNAME: $
          FTP_PASSWORD: $
          METHOD: sftp
          PORT: 22
          ARGS: --delete --exclude-glob=logs/*

I configured a couple of GitHub secrets in the settings for the login, and all set!

more tweaks

I would still like to keep the same minimalistic approach, with just a few improvements.

favicon, change font type and links

These were simple details that I had skipped in the rush of creating the site the first time. I had no favicon, so I used a generator to get one. Simple.

Another thing I wanted to get rid of were the image links. I wanted a geekier look, and changing to a monospace font did the trick; it also looks more like dev.to’s style, which is really cool. I also modified some CSS classes to display the links properly and use the whole screen.

Since I was here, I also changed the image.

adapt the mobile view

I used media queries as explained in this post. The idea is to have a basic mobile layout and then use media queries to identify the device and add the exceptions. My case was pretty simple: on mobile I wanted a bigger image, bigger text and a vertical list of links. The effect is that on mobile the page looks more like a card and the links are easier to tap.

<link rel="stylesheet" type="text/css" href="css/style.css" media="screen, handheld" />

<link rel="stylesheet" type="text/css" href="css/style-desktop.css" media="screen and (min-width: 70em)" />

The second link, with the desktop CSS rules, is applied only if the screen is wider than 70em. Keep in mind that the two are not exclusive: on a big screen both files are loaded and, as always in CSS, for selectors of equal specificity the last rule wins, so the desktop file replaces any existing rules. This is why that file contains only the things we want to override.

@media screen and (min-width: 70.5em) {
  #mainTitle {
    font-size: 2em;
  }

  .subtitle {
    font-size: 1.5em;
  }

  #profile {
    width: 18em;
  }

  .socialNetworkLink {
    display: inline;
    font-size: 1.7em;
  }

  .love {
    font-size: 1.0em;
  }
}

Then the overrides are only changing sizes and the way the links are displayed.
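
To make the “mobile first, then override” idea concrete, here is a toy Python model of how the two rule sets combine. The desktop values come from the media query above; the base (mobile) values are hypothetical, since the mobile stylesheet is not shown, and real CSS cascading also involves specificity, which this sketch ignores.

```python
# Hypothetical mobile-first base rules (not taken from the real stylesheet).
BASE_RULES = {
    "#mainTitle": {"font-size": "3em"},
    ".socialNetworkLink": {"display": "block", "font-size": "2.5em"},
}

# Desktop overrides, as in the media query above.
DESKTOP_OVERRIDES = {
    "#mainTitle": {"font-size": "2em"},
    ".socialNetworkLink": {"display": "inline", "font-size": "1.7em"},
}
DESKTOP_MIN_WIDTH_EM = 70.5


def effective_rules(viewport_width_em):
    """Merge the base rules with the overrides that apply at this width."""
    rules = {selector: dict(decls) for selector, decls in BASE_RULES.items()}
    if viewport_width_em >= DESKTOP_MIN_WIDTH_EM:
        # Later declarations win, so the desktop values replace the base ones.
        for selector, decls in DESKTOP_OVERRIDES.items():
            rules.setdefault(selector, {}).update(decls)
    return rules


if __name__ == "__main__":
    print(effective_rules(40)[".socialNetworkLink"])
    print(effective_rules(80)[".socialNetworkLink"])
```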

I also changed the footer, following the first approach explained in this link, so that the footer always sits at the bottom but scrolls along with the page if the page scrolls. This is very well explained in the link.

[Add before/after photos, using git to get the screenshots] [add the link to the repo, maybe to the diff between the two states]

before and after

next steps

I would like to have an area listing tiny projects or things that I have made, like a portfolio of some sort. I also think it could be cool to have a selector in the corner that switches between development stuff and music stuff. Maybe it could change to inverted colors (black background) and swap the links and the projects.

Back to childhood with interior design Permalink

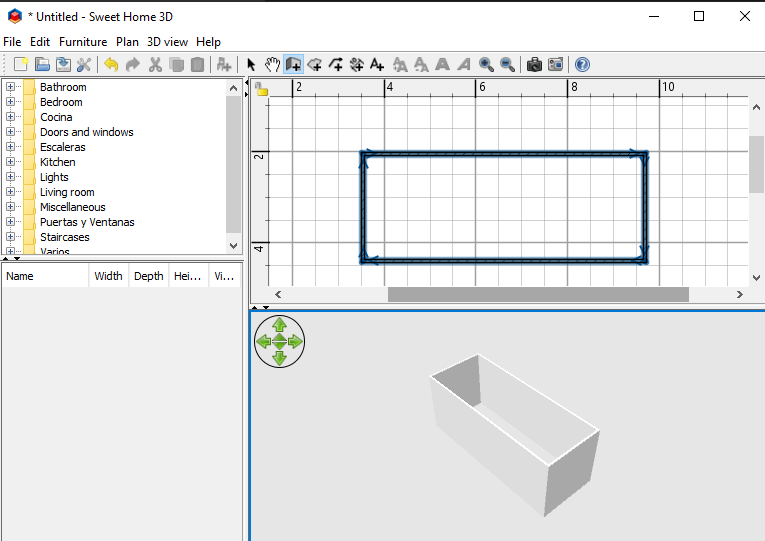

When I was a kid, I used to play Sims only in building mode. I thought THAT was the coolest part. Just go and build a cool house. Later on, I discover an interior design software (no idea which one) that probably was too complicated at the time (I spend a lot of time with any 3D related program).

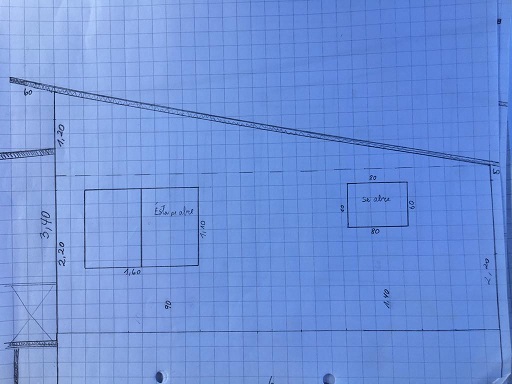

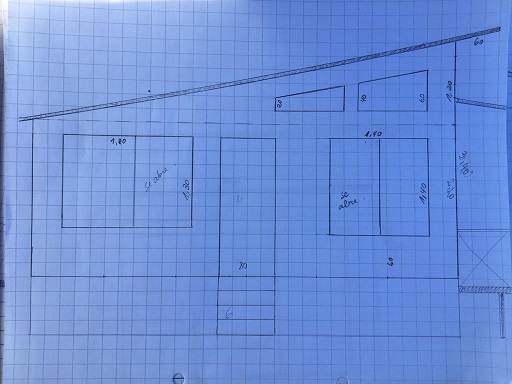

Recently my parents gave me some plans for a cabin they were building.

So I thought that it would be cool to have a 3D model of what the cabin would look like once done, also because I remembered it being a lot of fun.

So I did a simple Google search on free house design software and Sweet Home 3D came up as the most popular one. Pretty straightforward, you have a plan view where you can add walls and everything you need and a 3D view to see how you are doing.

The next step was simply to replicate the plans I had in the software as accurately as possible and see what it looked like. The final plans in Sweet Home 3D looked like this.

There are some parts of the original plan that I couldn’t get done in Sweet Home.

The render of the final result looked like this.

Final thoughts

Although it was a lot of fun, if I had to make another one I would either try the paid version of Sweet Home 3D or try other options like Virtual Architect.

Another option I saw while wandering around YouTube was exporting your modeled house to Blender and working from there. It looked very cool, but Blender is no joke. As a next step I would like to try this.

One of the problems with Sweet Home 3D was the surroundings, terrain, etc. Another video on YouTube showed a Blender plugin that grabs elevation data to model real terrain in Blender. This, plus importing the designed house, would be very cool for rendering a full view of the house and its surroundings.

Links

Image manipulation Slack slash command with AWS

After successfully sending messages back to Slack, another chance to integrate Slack with AWS came up. The idea was simple: I had an image to which I wanted to add arbitrary text. I envisioned this as a call from Slack using Slack Slash Commands, simply answered by an AWS Lambda… but… NO.

The idea

To be able to achieve this I ended up having to use quite a few pieces from the AWS stack:

Let’s tackle these steps one by one.

Slack slash command

This is the easy part. All we have to do is set up a Slack App and then set up a slash command.

In the slash command’s configuration a URL has to be defined, which is where the command will send its request. As specified in the Slack Slash Commands documentation, this request carries an application/x-www-form-urlencoded content-type header and a body containing, among other things, the slash command’s payload, which is the text we will print on our image. The header is relevant, as we will see when setting up our Lambda function.

There’s another important constraint regarding slash commands: the response to Slack’s request cannot take more than 3000 ms. We will see later that the image processing sometimes took longer. For these cases, Slack sends a response_url parameter to which the final response can be sent up to 30 minutes later.
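
To show what that form-encoded body looks like once decoded, here is a small Python sketch using only the standard library. The command name /meme, the example URL and the function names are made up for illustration; text and response_url are the fields discussed above.

```python
from urllib.parse import parse_qs


def parse_slash_command(body):
    """Decode an application/x-www-form-urlencoded slash command body."""
    fields = parse_qs(body, keep_blank_values=True)
    # parse_qs returns a list per field; slash commands send one value each.
    return {name: values[0] for name, values in fields.items()}


def immediate_ack(text):
    """Build a quick reply to send within the 3 second limit."""
    return {"response_type": "ephemeral", "text": f"Working on: {text}"}


if __name__ == "__main__":
    body = ("command=%2Fmeme&text=hello+world"
            "&response_url=https%3A%2F%2Fexample.com%2Fhook")
    command = parse_slash_command(body)
    print(command["text"], command["response_url"])
    print(immediate_ack(command["text"]))
```

The finished image link would then be sent later as a separate POST to the response_url.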

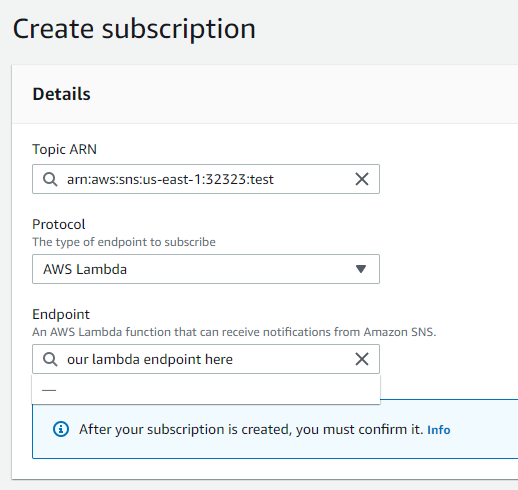
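To make the two-step answer concrete, this is a small Python sketch of the pattern: an immediate acknowledgement that fits within the 3 second window, and a delayed message posted to `response_url` once the work is done. The function names, the acknowledgement text and the example URLs are assumptions for illustration.

```python
import json
import urllib.request


def immediate_ack() -> dict:
    """Response returned right away so Slack does not time out."""
    return {"statusCode": 200, "body": "Working on your image..."}


def build_delayed_response(response_url: str, image_url: str) -> urllib.request.Request:
    """Build (but do not send) the POST that delivers the final answer."""
    payload = {
        "response_type": "in_channel",
        "attachments": [{"text": "", "image_url": image_url}],
    }
    return urllib.request.Request(
        response_url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Hypothetical values; in the real flow response_url comes from the slash command payload.
req = build_delayed_response(
    "https://hooks.slack.com/commands/T000/1/abc",
    "https://example.com/generated.jpg",
)
# Sending it would be: urllib.request.urlopen(req)
```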

AWS Services

API Gateway + Lambda + SNS + S3

To be able to respond right away, take our time to process the image and answer with it later, we will need an API Gateway endpoint pointing to a first Lambda, which we will call proxyLambda.

To handle long requests asynchronously we will make use of AWS SNS, a messaging service from Amazon. It will let the first proxyLambda publish a message to SNS and have another Lambda subscribed to SNS read and process the requests. We will call this Lambda subscriberLambda.

Once our subscriberLambda has finished, it needs to store the new image in an S3 bucket, since Slack expects just a text message with a link to the image to display.

With all this the flow should look something like this:

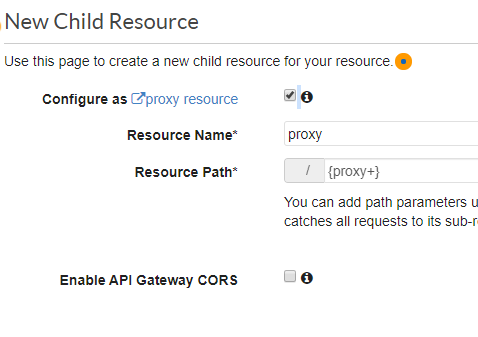

API Gateway configuration

The idea for the Gateway configuration is to get the Slack request proxied straight to the Lambda. The reason behind this is what we previously said about the Slack slash command request type. The standard way to attach an AWS API Gateway to a Lambda function is via a method (say GET or POST), where the gateway expects the payload to be JSON; if it is not, it fails and the request never gets to the Lambda. I was able to solve this issue by using the proxy configuration while configuring a new resource.

The configuration to send the request to the Lambda is also pretty straightforward.

![proxy resource 2](/assets/images/slash-comm-2.PNG "proxy resource 2")
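For reference, with the proxy integration the Lambda receives the whole HTTP request as a single event, with the body as a string and an `isBase64Encoded` flag. The following Python sketch shows how that body could be read; the sample events are trimmed down and hand-written, not captured from a real request.

```python
import base64


def extract_body(event: dict) -> str:
    """Return the request body of an API Gateway proxy event as text."""
    body = event.get("body") or ""
    if event.get("isBase64Encoded"):
        # API Gateway may hand over the body base64-encoded.
        return base64.b64decode(body).decode("utf-8")
    return body


# Hand-written sample events (only the fields used here).
plain_event = {"httpMethod": "POST", "body": "text=hello+world", "isBase64Encoded": False}
encoded_event = {
    "httpMethod": "POST",
    "body": base64.b64encode(b"text=hello+world").decode("ascii"),
    "isBase64Encoded": True,
}

print(extract_body(plain_event))    # text=hello+world
print(extract_body(encoded_event))  # text=hello+world
```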

Proxy Lambda function

Now that our requests are proxied to the Lambda, we will use it to push them to our SNS topic. This way we can respond to Slack very quickly and handle the actual request later. The Lambda function looks like this:

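    console.log("Loading function");

    var AWS = require("aws-sdk");

    exports.handler = function(event, context, callback) {
        // With the proxy integration, event.body already is a string holding
        // the slash command payload, so it is forwarded unchanged
        var eventText = event.body;
        console.log("Received event:", eventText);

        var sns = new AWS.SNS();
        var params = {
            Message: eventText,
            Subject: "Test SNS From Lambda",
            TopicArn: "arn:aws:sns:us-east-1:333333:your-SNS-topic"
        };

        // Answer Slack only once the message has been published; otherwise
        // the invocation may end before SNS receives it
        sns.publish(params, function(err) {
            if (err) {
                console.error("SNS publish failed:", err);
            }
            callback(null, {
                statusCode: 200,
                body: "temporary response to Slack...",
                headers: {'Content-Type': 'text/plain'}
            });
        });
    };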

This is actually all we need to forward the request to SNS. The important part here is the Topic ARN, which is generated when we create our messaging topic.
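For comparison, the same idea can be sketched in Python. This is not the code I deployed, just an equivalent outline: it assumes `boto3` is available (as it is in the Lambda Python runtimes) and takes the SNS client as a parameter so it can be tried locally with a stand-in. `FakeSns` is a made-up test double, and the topic ARN is the same placeholder used in the function above.

```python
TOPIC_ARN = "arn:aws:sns:us-east-1:333333:your-SNS-topic"  # placeholder


def proxy_handler(event, context, sns_client=None):
    """Publish the raw request body to SNS, then answer Slack immediately."""
    if sns_client is None:
        import boto3  # imported lazily so the sketch runs without AWS installed
        sns_client = boto3.client("sns")

    sns_client.publish(
        TopicArn=TOPIC_ARN,
        Subject="Test SNS From Lambda",
        Message=event["body"],
    )
    # Only respond once publish() has returned, so the message is not lost.
    return {"statusCode": 200, "body": "temporary response to Slack..."}


class FakeSns:
    """Stand-in client that records what would have been published."""

    def __init__(self):
        self.published = []

    def publish(self, **kwargs):
        self.published.append(kwargs)
        return {"MessageId": "fake"}


fake = FakeSns()
result = proxy_handler({"body": "text=hello+world"}, None, sns_client=fake)
print(result["statusCode"], fake.published[0]["Message"])
```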

Subscriber Lambda

Here is the Lambda that does the actual work. This function listens for messages on the SNS topic and acts when a new one arrives. Let’s see what the main part looks like. Bear in mind there’s a lot happening in here: reading the SNS message and parsing the request parameters, generating the new modified image, storing it in an S3 bucket and finally sending the link and response back to Slack.

    const engine = require('./ImageGenerator'); // This is a separate file with the image processing code
    const uuid = require('uuid'); // Id generator for the new images
    const AWS = require('aws-sdk');
    const request = require('request');

    const s3 = new AWS.S3();

    exports.handler = async function(event, context) {
        // This section reads the SNS message and parses the request parameters
        const message = event.Records[0].Sns.Message;
        const text = Buffer.from(message, 'base64').toString('utf8');
        const params = {};
        for (const param of text.split('&')) {
            const [key, value] = param.split('=');
            params[key] = value;
        }
        console.log(params);

        // The payload is form-urlencoded, so spaces arrive as '+'. Turn them back
        // into spaces before decoding so that literal plus signs (%2B) survive.
        const caption = decodeURIComponent((params.text || '').replace(/\+/g, ' '));

        // Use the imported file to add the requested text to the image
        const newImage = await engine.generate(caption);

        // Save the newly created image in S3 and wait for the response, since we
        // need the file URL to build the Slack message
        const paramsS3 = {
            Body: newImage,
            Bucket: 'YOUR-BUCKET-NAME',
            Key: uuid.v4() + '.jpg',
            ACL: 'public-read',
            ContentType: 'image/jpeg'
        };
        const data = await s3.upload(paramsS3).promise();

        console.log(params.response_url);
        await sendSlack(data.Location, decodeURIComponent(params.response_url));

        // The Lambda return value is not really relevant, since the goal of
        // sending the Slack message has already been achieved
    };

    // Wraps the callback-based request() call in a Promise so the handler can await it
    function sendSlack(imageUrl, url) {
        const options = {
            url: url,
            method: 'POST',
            headers: {
                'Content-Type': 'application/json',
                'Authorization': "Bearer xoxp-r46y54byeby6erny6erny6erny6ern"
            },
            body: JSON.stringify({
                'replace_original': true,
                'response_type': 'in_channel',
                'text': '',
                'attachments': [{
                    'text': '',
                    'image_url': imageUrl
                }]
            })
        };
        return new Promise(function(resolve, reject) {
            request(options, function(err, res, body) {
                if (err) {
                    return reject(err);
                }
                console.log(res.statusCode, body);
                resolve(body);
            });
        });
    }

The full Lambda with its dependencies can be found in this GitHub Repository. Take into account that your code can get a little too big for the Lambda console editor to handle, so you will need something like this to deploy the files from your local environment:

aws lambda update-function-code --function-name your-lambda-name --zip-file fileb://the-zip-with-the-code.zip --region us-east-1

This AWS CLI command requires you to zip your code first, so a tiny script that does both can come in handy at this point, especially if the intention is to automate the whole deploy.
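A minimal sketch of such a script in Python could look like this. It assumes the AWS CLI is installed and configured; the function names are mine, and the arguments are the same placeholders as in the command above.

```python
import subprocess
import zipfile
from pathlib import Path


def zip_directory(source_dir: str, zip_path: str) -> None:
    """Zip every file under source_dir, keeping paths relative to it."""
    source = Path(source_dir)
    target = Path(zip_path).resolve()
    with zipfile.ZipFile(zip_path, "w", zipfile.ZIP_DEFLATED) as archive:
        for path in sorted(source.rglob("*")):
            # Skip directories and the archive itself if it lives in source_dir.
            if path.is_file() and path.resolve() != target:
                archive.write(path, path.relative_to(source).as_posix())


def deploy_command(function_name: str, zip_path: str, region: str) -> list:
    """Build the AWS CLI call as an argument list."""
    return [
        "aws", "lambda", "update-function-code",
        "--function-name", function_name,
        "--zip-file", "fileb://" + zip_path,
        "--region", region,
    ]


def deploy(source_dir: str, function_name: str, zip_path: str, region: str) -> None:
    """Zip the code and push it to Lambda."""
    zip_directory(source_dir, zip_path)
    # check=True raises if the AWS CLI exits with an error.
    subprocess.run(deploy_command(function_name, zip_path, region), check=True)
```

It would be called as `deploy("./my-lambda", "your-lambda-name", "the-zip-with-the-code.zip", "us-east-1")`, where `./my-lambda` is a hypothetical folder holding the function code and its `node_modules`.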

Amazon SNS configuration

OK, now let’s configure the magic that connects these two Lambdas, a.k.a. the message broker configuration. This is very easy when done from the user interface. We go to the Amazon SNS configuration in the AWS console and create a new Topic. Just fill in the Name and Description and leave the rest for now.

Now the important part: once the Topic is created we need to create a new Subscription for it and select our subscriber Lambda there.

This is how we get our Lambda executed every time a new request is published to the topic.
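The same setup can also be scripted instead of clicked. The sketch below is an assumption about how that could look with `boto3`, not something I ran for this post: it creates the topic, allows SNS to invoke the Lambda, and subscribes the Lambda to the topic. The helper name and the statement id are placeholders.

```python
def connect_topic_to_lambda(sns_client, lambda_client, topic_name, function_arn):
    """Create an SNS topic and subscribe a Lambda function to it."""
    topic_arn = sns_client.create_topic(Name=topic_name)["TopicArn"]

    # SNS needs explicit permission to invoke the function.
    lambda_client.add_permission(
        FunctionName=function_arn,
        StatementId="allow-sns-invoke",  # placeholder id
        Action="lambda:InvokeFunction",
        Principal="sns.amazonaws.com",
        SourceArn=topic_arn,
    )

    subscription = sns_client.subscribe(
        TopicArn=topic_arn,
        Protocol="lambda",
        Endpoint=function_arn,
    )
    return topic_arn, subscription["SubscriptionArn"]
```

With real clients this would be called as `connect_topic_to_lambda(boto3.client("sns"), boto3.client("lambda"), "your-SNS-topic", subscriber_lambda_arn)`, where `subscriber_lambda_arn` stands for the ARN of the subscriber Lambda.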

Final thoughts

Although this was a cool approach for testing all these technologies, I’m pretty sure I would have gone with a different one had I realised my image processing would take so long for Slack; 3 seconds should be enough in my opinion. In any case I have learned a lot, and I hope you did too.

UPDATE

Because of the way Lambdas are executed in AWS, the state of some variables can be shared across executions. In this case I think the image manipulation engine holds state that will leak, and it should probably be moved inside the actual handler function. More on that here:

Maintaining global state in AWS Lambda functions with Async Hooks
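To illustrate the kind of leak I mean, here is a small Python sketch (the function in this post is Node.js, but the idea is the same). Anything created at module level survives between invocations that land on the same warm container, while anything created inside the handler starts fresh. The caption lists are a made-up stand-in for the image engine state.

```python
# Module level: initialised once per container, then reused by later invocations.
shared_captions = []


def leaky_handler(event, context):
    shared_captions.append(event["text"])
    return list(shared_captions)


def clean_handler(event, context):
    # Handler level: a new list on every invocation, nothing carries over.
    captions = []
    captions.append(event["text"])
    return captions


# Two invocations on the same warm container, simulated as two plain calls.
print(leaky_handler({"text": "first"}, None))   # ['first']
print(leaky_handler({"text": "second"}, None))  # ['first', 'second']
print(clean_handler({"text": "first"}, None))   # ['first']
print(clean_handler({"text": "second"}, None))  # ['second']
```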

Any thoughts? Feel free to contact me. Thank you!

Scheduled REST call with AWS Lambda and Slack feedback

This short post exists for two reasons. First, one of our recurring tasks at work was making a call to a REST service to create a webhook on it. Second, I’ve been playing around with Amazon Web Services for a while and thought that Lambdas were a perfect fit for running simple scripts. I did some research and, of course, I was not the only one. Once this process is done we usually let people know it’s ready via a Slack message, so I thought it would be cool to get that done too.

AWS Lambda

AWS Lambda lets you choose the language for your functions. I chose Python just to test it, and because it has cool and well-tested modules, such as Requests, which we’ll be using.

Setting up a Lambda function is very simple following the official documentation; everything needed to code and test is available straight from the Amazon console. So let’s see what we need to do next.

Breakdown

These are the three things we want to accomplish: the service callout, feedback through Slack and scheduled execution.

Service Callout

Assuming you already have a Python script on a Lambda in front of you, we will use the Requests module to call these services. This library makes it easy to deal with all the REST operations, and it’s a good choice since it can be taken from the libraries that are provided inside a Lambda by default. This raises a very important point: any extra dependencies have to be added to the Lambda manually, but some libraries are already available inside a Lambda function.

The following code imports the requests module from the available modules and makes the call to the service. In my case it needed to be a POST call and it had to include a bearer token for authentication, so I created a couple of extra methods.

    import json
    from botocore.vendored import requests

    api_url = 'https://someservices.com/getSomeStuff'
    myToken = 'someBearerToken'

    def getHeader():
        return {
            'Content-Type': 'application/json',
            'Authorization': 'Bearer {0}'.format(myToken),
            'user-agent': 'my-app/0.0.1'
        }

    def informPayload():
        return {
            'payload1': 'value1',
            'payload2': 'value2',
            'payload3': 'value3'
        }

    def makeCall():
        headers = getHeader()
        payload = informPayload()
        response = requests.post(api_url, headers=headers, json=payload)
        # I'm also interested in giving feedback when it fails (code 422), so I also return that response
        if response.status_code in (200, 201, 422):
            return json.loads(response.content.decode('utf-8'))
        else:
            print(response.content)
            return None

    # This is the default handler for the lambda, it will be the entry point
    def lambda_handler(event, context):
        response = makeCall()
        if response is not None:
            print("Here's your response: ")
            print(response)
        else:
            print('[!] Request Failed')
        return {
            'statusCode': 200,
            'body': json.dumps('Avinode Hook was executed!')
        }
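If the bundled `requests` module is not available in the runtime you are using, the same POST can be sketched with Python's standard library only. This is an alternative I am suggesting, not what I originally deployed; `build_request` and `make_call` are names made up for this sketch, and the URL and token are the same placeholders as in the code above.

```python
import json
import urllib.error
import urllib.request

api_url = 'https://someservices.com/getSomeStuff'
my_token = 'someBearerToken'


def build_request(url, token, payload):
    """Prepare a JSON POST request with a bearer token."""
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode('utf-8'),
        headers={
            'Content-Type': 'application/json',
            'Authorization': 'Bearer {0}'.format(token),
            'User-Agent': 'my-app/0.0.1',
        },
        method='POST',
    )


def make_call(payload):
    """Send the request; return the decoded JSON body, or None on failure."""
    request = build_request(api_url, my_token, payload)
    try:
        with urllib.request.urlopen(request, timeout=10) as response:
            return json.loads(response.read().decode('utf-8'))
    except urllib.error.HTTPError as error:
        # urlopen raises on 4xx/5xx; keep the 422 feedback like the version above.
        if error.code == 422:
            return json.loads(error.read().decode('utf-8'))
        print(error.read())
        return None
    except urllib.error.URLError as error:
        # Network-level problems (DNS, refused connection, timeout).
        print(error.reason)
        return None
```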

Feedback through Slack

Now that we have our call code, we can use the same process of calling an external service to call the Slack API and send a message to our team channel with the response. To get credentials to do so, you will have to go through the steps on Slack to create a new Slack App and install it in your team’s Slack workspace. You can follow the official Slack docs for this. With this information we are going to modify the lambda_handler method and add a new sender method. Here are the new methods:

    # Updated
    def lambda_handler(event, context):
        response = makeCall()
        if response is not None:
            slack_msg = '@here is the result of your call: \n \n'
            slack_msg += json.dumps(response)
            sendSlack(slack_msg)
            print("Here's your response: ")
            print(response)
        else:
            print('[!] Request Failed')
        return {
            'statusCode': 200,
            'body': json.dumps('Avinode Hook was executed!')
        }

    # New method
    def sendSlack(message):
        slack_post_message_url = 'https://slack.com/api/chat.postMessage'
        slack_bearer_token = 'yourOwnToken'
        headers = {
            'Content-Type': 'application/json',
            'Authorization': 'Bearer {0}'.format(slack_bearer_token)
        }
        body = {
            'channel': 'test2',
            'text': message
        }
        response = requests.post(slack_post_message_url, headers=headers, json=body)
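One thing worth knowing when adding this: Slack's Web API usually answers with HTTP 200 even when the call fails, and reports the problem in the JSON body through the `ok` and `error` fields. A small check like the following sketch (the helper name is mine) makes those failures visible in the Lambda logs.

```python
import json


def check_slack_response(body_text):
    """Return True if Slack accepted the message, otherwise log why not."""
    data = json.loads(body_text)
    if data.get('ok'):
        return True
    print('[!] Slack call failed: {0}'.format(data.get('error', 'unknown error')))
    return False


# Hand-written examples of the two response shapes.
print(check_slack_response('{"ok": true, "channel": "C123"}'))              # True
print(check_slack_response('{"ok": false, "error": "channel_not_found"}'))  # False
```

Inside `sendSlack` this could be called as `check_slack_response(response.text)` right after the `requests.post` call.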

Scheduled Execution

Now, to execute your script on a regular basis: Lambdas can be triggered by events, and these events can be configured with CRON expressions. This is the ultimate tool for scheduling tasks and it is supported natively by Lambdas. Following these two tutorials from the official Amazon docs we can have our function scheduled in no time.
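As a quick reference for the expression format, AWS schedule rules use six fields: minutes, hours, day of month, month, day of week and year, wrapped in `cron(...)` and evaluated in UTC. One of the day-of-month or day-of-week fields has to be `?`. The helper below is a hypothetical convenience for building a daily expression, not part of any AWS library.

```python
def daily_cron(hour, minute=0):
    """Build a schedule expression that fires once a day at hour:minute UTC."""
    if not (0 <= hour <= 23 and 0 <= minute <= 59):
        raise ValueError('hour must be 0-23 and minute 0-59')
    # Fields: minutes hours day-of-month month day-of-week year
    return 'cron({0} {1} * * ? *)'.format(minute, hour)


print(daily_cron(7, 30))  # cron(30 7 * * ? *)
print(daily_cron(12))     # cron(0 12 * * ? *)
```

The resulting string is what goes into the schedule expression field of the rule that triggers the Lambda.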